MindSpore Getting Started: Running the DeepLabV3 Model End to End


Author: kaierlong | Source: OSChina blog

1. Environment Setup

Notes:

First set up the base development environment by following the author's guide to installing MindSpore 1.5.0 on a GPU server (https://my.oschina.net/kaierlong/blog/5375003).

1.1 Clone the repository and enter the local deeplabv3 directory

git clone https://gitee.com/mindspore/models.git mindspore_models

cd mindspore_models/official/cv/deeplabv3

You can use tree to view the structure of the deeplabv3 directory, which looks like this:

.

├── ascend310_infer

│ ├── build.sh

│ ├── CMakeLists.txt

│ ├── fusion_switch.cfg

│ ├── inc

│ │ └── utils.h

│ └── src

│ ├── main.cc

│ └── utils.cc

├── ascend310_quant_infer

│ ├── acc.py

│ ├── config.cfg

│ ├── export_bin.py

│ ├── fusion_switch.cfg

│ ├── inc

│ │ ├── model_process.h

│ │ ├── sample_process.h

│ │ └── utils.h

│ ├── post_quant.py

│ ├── run_quant_infer.sh

│ └── src

│ ├── acl.json

│ ├── build.sh

│ ├── CMakeLists.txt

│ ├── main.cpp

│ ├── model_process.cpp

│ ├── sample_process.cpp

│ └── utils.cpp

├── default_config.yaml

├── Dockerfile

├── eval.py

├── export.py

├── mindspore_hub_conf.py

├── model_utils

│ ├── config.py

│ ├── device_adapter.py

│ ├── __init__.py

│ ├── local_adapter.py

│ └── moxing_adapter.py

├── postprocess.py

├── README_CN.md

├── README.md

├── requirements.txt

├── scripts

│ ├── build_data.sh

│ ├── docker_start.sh

│ ├── run_distribute_train_s16_r1.sh

│ ├── run_distribute_train_s8_r1.sh

│ ├── run_distribute_train_s8_r2.sh

│ ├── run_eval_s16.sh

│ ├── run_eval_s8_multiscale_flip.sh

│ ├── run_eval_s8_multiscale.sh

│ ├── run_eval_s8.sh

│ ├── run_infer_310.sh

│ ├── run_standalone_train_cpu.sh

│ └── run_standalone_train.sh

├── src

│ ├── data

│ │ ├── build_seg_data.py

│ │ ├── dataset.py

│ │ ├── get_dataset_lst.py

│ │ └── __init__.py

│ ├── __init__.py

│ ├── loss

│ │ ├── __init__.py

│ │ └── loss.py

│ ├── nets

│ │ ├── deeplab_v3

│ │ │ ├── deeplab_v3.py

│ │ │ └── __init__.py

│ │ ├── __init__.py

│ │ └── net_factory.py

│ ├── tools

│ │ ├── get_multicards_json.py

│ │ └── __init__.py

│ └── utils

│ ├── __init__.py

│ └── learning_rates.py

└── train.py

15 directories, 64 files

1.2 Prepare the development environment (install the Python dependencies)

pip3 install -r requirements.txt

2. Data Preparation

2.1 Download the datasets

Dataset download links:

- Pascal VOC dataset

  - Homepage: http://host.robots.ox.ac.uk/pascal/VOC/voc2012/index.html

  - Download: http://host.robots.ox.ac.uk/pascal/VOC/voc2012/VOCtrainval_11-May-2012.tar

- Semantic Boundaries Dataset (SBD)

  - Homepage: http://home.bharathh.info/pubs/codes/SBD/download.html

  - Download: http://www.eecs.berkeley.edu/Research/Projects/CS/vision/grouping/semantic_contours/benchmark.tgz

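Note:

- If downloading with wget is slow, download the files with another download manager (such as Xunlei/Thunder) and then upload them to the server.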

2.1.1 Create a directory for the raw data and download the datasets

mkdir raw_data && cd raw_data

wget http://host.robots.ox.ac.uk/pascal/VOC/voc2012/VOCtrainval_11-May-2012.tar

wget http://www.eecs.berkeley.edu/Research/Projects/CS/vision/grouping/semantic_contours/benchmark.tgz

2.1.2 Verify the dataset MD5 checksums (optional)

md5sum benchmark.tgz VOCtrainval_11-May-2012.tar

The output is as follows:

82b4d87ceb2ed10f6038a1cba92111cb benchmark.tgz

6cd6e144f989b92b3379bac3b3de84fd VOCtrainval_11-May-2012.tar
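If md5sum is unavailable or you want to script the check, the same verification can be sketched in Python with hashlib. This is a minimal sketch: the expected digests are copied from the md5sum output above, and the helper names md5_of and verify are our own, not part of the repository.

```python
import hashlib
from pathlib import Path

# Expected checksums, copied from the md5sum output above.
EXPECTED_MD5 = {
    "benchmark.tgz": "82b4d87ceb2ed10f6038a1cba92111cb",
    "VOCtrainval_11-May-2012.tar": "6cd6e144f989b92b3379bac3b3de84fd",
}


def md5_of(path, chunk_size=1 << 20):
    """Compute a file's MD5 hex digest, reading 1 MiB chunks to bound memory use."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(raw_dir=".", expected=EXPECTED_MD5):
    """Return {file_name: True/False}; a missing file counts as a mismatch."""
    results = {}
    for name, want in expected.items():
        path = Path(raw_dir) / name
        results[name] = path.is_file() and md5_of(path) == want
    return results


if __name__ == "__main__":
    # Run from inside raw_data, next to the downloaded archives.
    for name, ok in verify().items():
        print(f"{name}: {'OK' if ok else 'MISMATCH or missing'}")
```

Reading in chunks matters here because VOCtrainval_11-May-2012.tar is around 2 GB and should not be loaded into memory at once.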

2.1.3 Extract the datasets

tar zxvf benchmark.tgz

tar xvf VOCtrainval_11-May-2012.tar

2.1.4 Inspect the dataset directory structure

tree -d benchmark_RELEASE/

The output is as follows:

benchmark_RELEASE/

├── benchmark_code_RELEASE

│ ├── cp_src

│ └── demo

│ ├── datadir

│ │ ├── cls

│ │ ├── img

│ │ └── inst

│ ├── indir

│ └── outdir

└── dataset

├── cls

├── img

└── inst

tree -d VOCdevkit

The output is as follows:

VOCdevkit

└── VOC2012

├── Annotations

├── ImageSets

│ ├── Action

│ ├── Layout

│ ├── Main

│ └── Segmentation

├── JPEGImages

├── SegmentationClass

└── SegmentationObject

2.1.5 Generate the dataset list files

This step creates three new files under raw_data: voc_train_lst.txt, voc_val_lst.txt and vocaug_train_lst.txt. Run it from the deeplabv3 root:

cd ..

python3 src/data/get_dataset_lst.py --data_dir ./raw_data

The output is as follows:

Data dir is: ./raw_data

converting voc color png to gray png ...

converting done.

generating voc train list success.

generating voc val list success.

converting sbd annotations to png ...

converting done

generating voc train aug list success.
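Before converting to MindRecord, it can help to confirm that every path in the generated list files exists. The sketch below assumes each non-empty line holds an image path and a label path separated by whitespace, relative to the same --data_root later passed to build_seg_data.py (absolute paths also work, since joining a root with an absolute path yields the absolute path). That line format is our assumption, so look at a few lines of your own list files first. check_list_file is a hypothetical helper, not part of the repository.

```python
from pathlib import Path


def check_list_file(lst_path, data_root="./"):
    """Return (num_pairs, missing_paths) for an image/label list file.

    Assumes each non-empty line is "<image_path> <label_path>", with both
    paths relative to data_root.
    """
    root = Path(data_root)
    num_pairs, missing = 0, []
    with open(lst_path, encoding="utf-8") as f:
        for line_no, line in enumerate(f, start=1):
            parts = line.split()
            if not parts:
                continue  # tolerate blank lines
            if len(parts) != 2:
                raise ValueError(f"{lst_path}:{line_no}: expected 2 paths, got {len(parts)}")
            num_pairs += 1
            for rel in parts:
                if not (root / rel).is_file():
                    missing.append(rel)
    return num_pairs, missing


if __name__ == "__main__":
    for name in ("voc_train_lst.txt", "voc_val_lst.txt", "vocaug_train_lst.txt"):
        lst = Path("raw_data") / name
        if lst.is_file():
            n, missing = check_list_file(lst)
            print(f"{name}: {n} pairs, {len(missing)} missing files")
```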

2.1.6 Convert the datasets to MindRecord format

Create the output directories:

mkdir vocaug_mindrecords

mkdir voctrain_mindrecords

mkdir vocval_mindrecords

Convert the vocaug_train data:

# Pay attention to the data_root and dst_path arguments

python3 src/data/build_seg_data.py --data_root ./ --data_lst ./raw_data/vocaug_train_lst.txt --dst_path ./vocaug_mindrecords/mindrecord_ --num_shards 8 --shuffle True

The output is as follows:

number of samples: 10582

number of samples written: 1000

number of samples written: 2000

number of samples written: 3000

number of samples written: 4000

number of samples written: 5000

number of samples written: 6000

number of samples written: 7000

number of samples written: 8000

number of samples written: 9000

number of samples written: 10000

number of samples written: 10582

You can inspect the converted data directory with tree vocaug_mindrecords/; the output is as follows:

vocaug_mindrecords/

├── mindrecord_0

├── mindrecord_0.db

├── mindrecord_1

├── mindrecord_1.db

├── mindrecord_2

├── mindrecord_2.db

├── mindrecord_3

├── mindrecord_3.db

├── mindrecord_4

├── mindrecord_4.db

├── mindrecord_5

├── mindrecord_5.db

├── mindrecord_6

├── mindrecord_6.db

├── mindrecord_7

└── mindrecord_7.db

Then convert the voc_train and voc_val datasets in turn:

python3 src/data/build_seg_data.py --data_root ./ --data_lst ./raw_data/voc_train_lst.txt --dst_path ./voctrain_mindrecords/mindrecord_ --num_shards 8 --shuffle True

python3 src/data/build_seg_data.py --data_root ./ --data_lst ./raw_data/voc_val_lst.txt --dst_path ./vocval_mindrecords/mindrecord_ --num_shards 8 --shuffle True
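Here --dst_path acts as a file-name prefix, and --num_shards 8 yields eight data files, each with a matching .db index file, as the tree output above shows. The sketch below uses hypothetical helpers (expected_shard_files, missing_shards) with the naming pattern taken from that output, to check that all three output directories are complete before training.

```python
from pathlib import Path


def expected_shard_files(prefix, num_shards):
    """Files expected for a sharded MindRecord output, following the tree output:
    shard i has a data file "<prefix><i>" and an index file "<prefix><i>.db"."""
    files = []
    for i in range(num_shards):
        files.append(f"{prefix}{i}")
        files.append(f"{prefix}{i}.db")
    return files


def missing_shards(prefix, num_shards):
    """Return the expected shard files that do not exist on disk."""
    return [p for p in expected_shard_files(prefix, num_shards) if not Path(p).is_file()]


if __name__ == "__main__":
    for d in ("vocaug_mindrecords", "voctrain_mindrecords", "vocval_mindrecords"):
        missing = missing_shards(f"./{d}/mindrecord_", 8)
        print(d, "OK" if not missing else f"missing: {missing}")
```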

3. Model Training

3.1 Download the pretrained model

wget https://download.mindspore.cn/model_zoo/r1.2/resnet101_ascend_v120_imagenet2012_official_cv_bs32_acc78/resnet101_ascend_v120_imagenet2012_official_cv_bs32_acc78.ckpt

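3.2 Adding GPU training support

At the time of writing, deeplabv3 only supports CPU and Ascend, so GPU support has to be added. Although the author's machine has 4 GPUs, to be on the safe side the code is modified only for single-machine, single-GPU training.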

3.2.1 Back up the code

cp train.py train.py.bak

cp default_config.yaml default_config.yaml.bak

3.2.2 Modify the code

Replace lines 109 to 113 of the original train.py with the following:

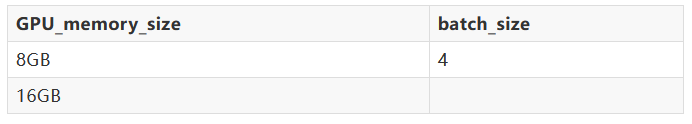

Note: adjust the max_device_memory parameter to match the actual memory of your GPU.

if args.device_target == "CPU":
    context.set_context(mode=context.GRAPH_MODE, save_graphs=False, device_target="CPU")
elif args.device_target == "GPU":
    # Cap the device memory MindSpore may use; set this to fit your GPU.
    context.set_context(mode=context.GRAPH_MODE, save_graphs=False,
                        device_target="GPU", device_id=get_device_id(),
                        max_device_memory="16GB")
else:
    context.set_context(mode=context.GRAPH_MODE, save_graphs=False,
                        device_target="Ascend", device_id=get_device_id())

Replace line 192 of the original train.py with the following:

if args.device_target == "Ascend":
    amp_level = "O3"
else:  # CPU or GPU
    amp_level = "O0"

Replace line 205 of the original train.py with the following:

model.train(args.train_epochs, dataset, callbacks=cbs, dataset_sink_mode=(args.device_target == "Ascend"))

Change line 11 of the original default_config.yaml to:

device_target: "GPU" # ['Ascend', 'CPU', 'GPU']
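Taken together, the train.py edits and the config change amount to a small per-device decision table. The hypothetical helper below only summarizes those choices for reference; train.py itself keeps the inline if/else checks shown above, and the "16GB" value is simply the author's setting.

```python
def device_train_settings(device_target):
    """Summarize the per-device choices made by the train.py edits above (illustrative only)."""
    if device_target not in ("Ascend", "GPU", "CPU"):
        raise ValueError(f"unsupported device_target: {device_target!r}")
    is_ascend = device_target == "Ascend"
    return {
        # O3 runs the network in float16; the GPU SoftmaxCrossEntropyWithLogits
        # kernel only accepts float32 (see Problem 1), so GPU and CPU use O0.
        "amp_level": "O3" if is_ascend else "O0",
        # Data sinking is kept only on Ascend.
        "dataset_sink_mode": is_ascend,
        # max_device_memory is only passed to set_context on GPU.
        "max_device_memory": "16GB" if device_target == "GPU" else None,
    }


if __name__ == "__main__":
    for target in ("Ascend", "GPU", "CPU"):
        print(target, device_train_settings(target))
```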

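3.3 Train s16 on the VOCaug dataset, fine-tuning the pretrained ResNet-101 model

Notes:

- --data_file: pass only the first shard (mindrecord_0). As the MindSpore MindDataset documentation puts it: "If dataset_file is a str, it represents for a file name of one component of a mindrecord source, other files with identical source in the same path will be found and loaded automatically."

- Adjust batch_size to the memory actually available on your GPU. For reference, the commands below use batch_size=16 for s16 and batch_size=8 for s8.

Steps:

In the deeplabv3 project root, create an ms_log directory for the logs and a ckpt directory for the model checkpoints.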

mkdir ms_log

mkdir -p s16_aug_train_1g/ckpt

Make only the chosen GPU visible (skip this step on a single-GPU machine):

export CUDA_VISIBLE_DEVICES=1

Check that the setting took effect:

echo $CUDA_VISIBLE_DEVICES

The output is as follows:

1

Start GPU training with the following command:

nohup python3 train.py --train_dir s16_aug_train_1g/ckpt --data_file ./vocaug_mindrecords/mindrecord_0 --device_target GPU --train_epochs=100 --batch_size=16 --crop_size=513 --base_lr=0.015 --lr_type=cos --min_scale=0.5 --max_scale=2.0 --ignore_label=255 --num_classes=21 --model=deeplab_v3_s16 --ckpt_pre_trained=./resnet101_ascend_v120_imagenet2012_official_cv_bs32_acc78.ckpt --save_steps=1500 --keep_checkpoint_max=20 > ms_log/s16_aug_train_1g.log 2>&1 &
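The training commands are long and easy to mistype. One option is to keep the options in a dict and build the argument list programmatically. The sketch below is a convenience wrapper under our own assumptions (build_train_cmd, launch and S16_AUG are hypothetical names); the option values are copied from the s16 command above, and the --key=value form is the same one the later commands use. It sets CUDA_VISIBLE_DEVICES only for the child process instead of exporting it in the shell.

```python
import os
import shlex
import subprocess

# Option values copied from the s16 command above; edit them per run.
S16_AUG = {
    "train_dir": "s16_aug_train_1g/ckpt",
    "data_file": "./vocaug_mindrecords/mindrecord_0",
    "device_target": "GPU",
    "train_epochs": 100,
    "batch_size": 16,
    "crop_size": 513,
    "base_lr": 0.015,
    "lr_type": "cos",
    "min_scale": 0.5,
    "max_scale": 2.0,
    "ignore_label": 255,
    "num_classes": 21,
    "model": "deeplab_v3_s16",
    "ckpt_pre_trained": "./resnet101_ascend_v120_imagenet2012_official_cv_bs32_acc78.ckpt",
    "save_steps": 1500,
    "keep_checkpoint_max": 20,
}


def build_train_cmd(options, script="train.py"):
    """Turn a dict of options into an argv list using the --key=value form."""
    return ["python3", script] + [f"--{key}={value}" for key, value in options.items()]


def launch(options, log_path, visible_gpu="1"):
    """Start training as a child process with stdout and stderr written to log_path."""
    env = dict(os.environ, CUDA_VISIBLE_DEVICES=visible_gpu)
    with open(log_path, "w") as log:
        # The child inherits its own copy of the log file descriptor,
        # so closing the file here does not affect it.
        return subprocess.Popen(build_train_cmd(options), stdout=log,
                                stderr=subprocess.STDOUT, env=env)


if __name__ == "__main__":
    # Dry run: print the shell-quoted command instead of starting training.
    print(shlex.join(build_train_cmd(S16_AUG)))
```

Unlike nohup, launch() does not shield the child from a terminal hangup. If you need that, run the script itself under nohup, or inside tmux or screen.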

While training runs, you can monitor GPU usage with:

watch -n 0.1 -d nvidia-smi
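To log GPU usage instead of watching it interactively, you can have nvidia-smi print selected fields as CSV. The sketch below queries index, memory.used, memory.total and utilization.gpu with --format=csv,noheader,nounits and parses the result (memory is reported in MiB). The parser assumes all four fields are numeric. Some GPUs report [N/A] for utilization, and that would need extra handling.

```python
import shutil
import subprocess

FIELDS = ("index", "memory.used", "memory.total", "utilization.gpu")


def parse_gpu_csv(text):
    """Parse `nvidia-smi --format=csv,noheader,nounits` output, one GPU per line."""
    gpus = []
    for line in text.strip().splitlines():
        index, used, total, util = (field.strip() for field in line.split(","))
        gpus.append({
            "index": int(index),
            "memory_used_mib": int(used),
            "memory_total_mib": int(total),
            "utilization_pct": int(util),
        })
    return gpus


def query_gpus():
    """Run nvidia-smi once and return the parsed per-GPU readings."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=" + ",".join(FIELDS), "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_csv(result.stdout)


if __name__ == "__main__" and shutil.which("nvidia-smi"):
    for gpu in query_gpus():
        print(f"GPU {gpu['index']}: {gpu['memory_used_mib']}/{gpu['memory_total_mib']} MiB, "
              f"{gpu['utilization_pct']}% util")
```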

The figure below shows roughly 20 seconds of GPU usage captured by the author.

Figure: GPU usage

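3.4 Train s8 on the VOCaug dataset, fine-tuning the model from the previous step

Notes:

- Replace ckpt_pre_trained with the path of the checkpoint trained in the previous step, for example:

  ./s16_aug_train_1g/ckpt/deeplab_v3_s16-7_534.ckpt

Steps: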

mkdir -p s8_aug_train_1g/ckpt

nohup python3 train.py --train_dir=s8_aug_train_1g/ckpt --data_file=./vocaug_mindrecords/mindrecord_0 --device_target=GPU --train_epochs=200 --batch_size=8 --crop_size=513 --base_lr=0.02 --lr_type=cos --min_scale=0.5 --max_scale=2.0 --ignore_label=255 --num_classes=21 --model=deeplab_v3_s8 --loss_scale=2048 --ckpt_pre_trained=./s16_aug_train_1g/ckpt/deeplab_v3_s16-200_661.ckpt --save_steps=1000 --keep_checkpoint_max=20 > ms_log/s8_aug_train_1g.log 2>&1 &

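3.5 Train s8 on the VOCtrain dataset, fine-tuning the model from the previous step

Notes:

- Replace ckpt_pre_trained with the path of the checkpoint trained in the previous step, for example:

  ./s8_aug_train_1g/ckpt/deeplab_v3_s8-7_534.ckpt

Steps: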

mkdir -p s8_voc_train_1g/ckpt

nohup python3 train.py --train_dir=s8_voc_train_1g/ckpt --data_file=./voctrain_mindrecords/mindrecord_0 --device_target GPU --train_epochs=200 --batch_size=8 --crop_size=513 --base_lr=0.008 --lr_type=cos --min_scale=0.5 --max_scale=2.0 --ignore_label=255 --num_classes=21 --model=deeplab_v3_s8 --loss_scale=2048 --ckpt_pre_trained=s8_aug_train_1g/ckpt/deeplab_v3_s8-200_1322.ckpt --save_steps=50 --keep_checkpoint_max=200 > ms_log/s8_voc_train_1g.log 2>&1 &
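The checkpoint names used in 3.4, 3.5 and section 4 follow the pattern <prefix>-<epoch>_<step>.ckpt, where step counts steps within the epoch. The step counts can be reproduced from the sample counts: 10582 VOCaug samples (from the build_seg_data.py log) and 1464 images in the VOC2012 segmentation train split. This assumes the last incomplete batch is dropped and each run lasted 200 epochs. If you train for a different number of epochs, only the epoch part of the name changes. The helpers are illustrative, not taken from the repository.

```python
def steps_per_epoch(num_samples, batch_size, drop_remainder=True):
    """Number of training steps in one epoch."""
    if drop_remainder:
        return num_samples // batch_size
    return -(-num_samples // batch_size)  # ceiling division keeps the partial batch


def ckpt_name(prefix, epoch, step_in_epoch):
    """Checkpoint file name in the <prefix>-<epoch>_<step>.ckpt pattern."""
    return f"{prefix}-{epoch}_{step_in_epoch}.ckpt"


# (model prefix, number of samples, batch_size) for the three training stages.
RUNS = [
    ("deeplab_v3_s16", 10582, 16),  # 3.3: VOCaug, s16
    ("deeplab_v3_s8", 10582, 8),    # 3.4: VOCaug, s8
    ("deeplab_v3_s8", 1464, 8),     # 3.5: VOC train, s8
]

for prefix, num_samples, batch_size in RUNS:
    print(ckpt_name(prefix, 200, steps_per_epoch(num_samples, batch_size)))
```

This prints deeplab_v3_s16-200_661.ckpt, deeplab_v3_s8-200_1322.ckpt and deeplab_v3_s8-200_183.ckpt, matching the checkpoint paths in the commands above.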

4. Model Evaluation

4.1 Evaluate s16 on the VOC val dataset

Evaluation command:

nohup python3 eval.py --data_root=./ --data_lst=./raw_data/voc_val_lst.txt --batch_size=8 --crop_size=513 --ignore_label=255 --num_classes=21 --model=deeplab_v3_s16 --scales_type=0 --freeze_bn=True --ckpt_path=./s16_aug_train_1g/ckpt/deeplab_v3_s16-200_661.ckpt > ms_log/eval_s16.log 2>&1 &

View the results with tail -n 132 ms_log/eval_s16.log.
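The evaluation scores the 21 VOC classes and ignores pixels labelled 255 (--num_classes=21, --ignore_label=255). The usual way to compute mean IoU is to accumulate a confusion matrix and take IoU = TP / (TP + FP + FN) per class. The pure-Python sketch below shows that computation on flattened label lists. It illustrates the standard metric, and eval.py's own implementation may differ in details. Classes that appear in neither the ground truth nor the predictions are left out of the average.

```python
def confusion_matrix(gt, pred, num_classes=21, ignore_label=255):
    """Count (ground truth, prediction) pairs; rows are ground truth, columns predictions."""
    hist = [[0] * num_classes for _ in range(num_classes)]
    for g, p in zip(gt, pred):
        if g == ignore_label or not (0 <= g < num_classes):
            continue  # ignored pixels (label 255) do not count toward any class
        hist[g][p] += 1
    return hist


def per_class_iou(hist):
    """IoU_c = TP / (TP + FP + FN); None for classes absent from both gt and pred."""
    n = len(hist)
    ious = []
    for c in range(n):
        tp = hist[c][c]
        fn = sum(hist[c]) - tp                       # class-c pixels predicted as another class
        fp = sum(hist[r][c] for r in range(n)) - tp  # other pixels predicted as class c
        denom = tp + fp + fn
        ious.append(tp / denom if denom else None)
    return ious


def mean_iou(hist):
    """Average the IoUs of the classes that actually occur."""
    valid = [iou for iou in per_class_iou(hist) if iou is not None]
    return sum(valid) / len(valid) if valid else 0.0


# Toy example with 2 classes; the last pixel is ignored.
gt = [0, 0, 1, 1, 255]
pred = [0, 1, 1, 1, 0]
hist = confusion_matrix(gt, pred, num_classes=2)
print(hist, per_class_iou(hist), mean_iou(hist))
```

In the toy example, class 0 gets IoU 1/2 and class 1 gets 2/3, so the mean IoU is 7/12.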

4.2 Evaluate s8 on the VOC val dataset

Evaluation command:

nohup python3 eval.py --data_root=./ --data_lst=./raw_data/voc_val_lst.txt --batch_size=8 --crop_size=513 --ignore_label=255 --num_classes=21 --model=deeplab_v3_s8 --scales_type=0 --freeze_bn=True --ckpt_path=./s8_voc_train_1g/ckpt/deeplab_v3_s8-200_183.ckpt > ms_log/eval_s8.log 2>&1 &

View the results with tail -n 132 ms_log/eval_s8.log.

4.3 Evaluate s8 on the VOC val dataset with multi-scale inference

Evaluation command:

nohup python3 eval.py --data_root=./ --data_lst=./raw_data/voc_val_lst.txt --batch_size=8 --crop_size=513 --ignore_label=255 --num_classes=21 --model=deeplab_v3_s8 --scales_type=1 --freeze_bn=True --ckpt_path=./s8_voc_train_1g/ckpt/deeplab_v3_s8-200_183.ckpt > ms_log/eval_s8_multiscale.log 2>&1 &

View the results with tail -n 132 ms_log/eval_s8_multiscale.log.

4.4 Evaluate s8 on the VOC val dataset with multi-scale inference and flipping

Evaluation command:

nohup python3 eval.py --data_root=./ --data_lst=./raw_data/voc_val_lst.txt --batch_size=8 --crop_size=513 --ignore_label=255 --num_classes=21 --model=deeplab_v3_s8 --scales_type=1 --flip=True --freeze_bn=True --ckpt_path=./s8_voc_train_1g/ckpt/deeplab_v3_s8-200_183.ckpt > ms_log/eval_s8_multiscale_flip.log 2>&1 &

View the results with tail -n 132 ms_log/eval_s8_multiscale_flip.log.
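Setting --scales_type=1 and --flip=True turns on test-time augmentation. The network runs on several rescaled copies of each image and, with flipping, on their horizontal mirrors, and the class scores are combined before the per-pixel argmax. The sketch below shows only the flip part, on nested lists, with a hypothetical model callable. It is a generic illustration of the technique, not the code in eval.py. Multi-scale evaluation follows the same pattern, with resize and resize-back in place of flip and flip-back.

```python
def hflip(grid):
    """Mirror every row of an H x W (x C) nested list left to right."""
    return [row[::-1] for row in grid]


def argmax(scores):
    """Index of the largest score (the first one on ties)."""
    return max(range(len(scores)), key=scores.__getitem__)


def predict_with_flip(model, image):
    """Average class scores of the image and its mirror, then take a per-pixel argmax.

    `model` is a hypothetical callable mapping an H x W image to H x W x C scores.
    """
    scores = model(image)
    mirrored = hflip(model(hflip(image)))  # flip the mirrored prediction back into place
    return [
        [argmax([(a + b) / 2 for a, b in zip(sa, sb)]) for sa, sb in zip(row_a, row_b)]
        for row_a, row_b in zip(scores, mirrored)
    ]


def toy_model(image):
    """Position-dependent toy scorer: class 0 = pixel value, class 1 = column index."""
    return [[[value, float(j)] for j, value in enumerate(row)] for row in image]


image = [[0.5, 0.5, 0.5]]
print(predict_with_flip(toy_model, image))  # [[1, 1, 1]]
```

Without flipping, the toy model labels the first pixel 0. Averaging with the mirrored pass changes that pixel, which shows how flipping makes predictions less sensitive to left-right position.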

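5. Online Inference

Because the deeplabv3 inference code currently runs only on Ascend hardware (the author tried modifying it to support GPU and CPU, but both attempts still produced errors that have not been debugged yet), we need to provision an Ascend server and set up its environment for the following steps.

Notes:

- The deeplabv3 data is large, so either attach and mount an additional cloud disk in advance, or choose a 200 GB system disk when creating the server.

- For provisioning the Ascend hardware platform and setting up MindSpore on it, see the author's article: https://my.oschina.net/kaierlong/blog/5377351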

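Download the data in the cloud

Follow the Data Preparation section above; on the server you only need to download and extract the data. Clone the code repository to the server beforehand.

On the cloud server, create the SegmentationClassGray directory inside the deeplabv3 directory: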

mkdir -p raw_data/VOCdevkit/VOC2012/SegmentationClassGray

Locally, export the models

Note: replace ckpt_file with the path to your own checkpoint.

python3 export.py --ckpt_file=./s16_aug_train_1g/ckpt/deeplab_v3_s16-200_661.ckpt --file_name=deeplab_v3_s16_200_661 --file_format=MINDIR --export_model=deeplab_v3_s16 --device_target=GPU

python3 export.py --ckpt_file=./s8_voc_train_1g/ckpt/deeplab_v3_s8-200_183.ckpt --file_name=deeplab_v3_s8_200_183 --file_format=MINDIR --export_model=deeplab_v3_s8 --device_target=GPU

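Locally, upload the files to the server

Note: replace s8_voc_train_1g/ckpt/deeplab_v3_s8-200_183.ckpt with your own checkpoint, and set remote_ip to your server's IP address.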

remote_ip="your_server_ip"

scp *.mindir root@${remote_ip}:/root/codes/models/official/cv/deeplabv3/

scp s8_voc_train_1g/ckpt/deeplab_v3_s8-200_183.ckpt root@${remote_ip}:/root/codes/models/official/cv/deeplabv3/

scp raw_data/*.txt root@${remote_ip}:/root/codes/models/official/cv/deeplabv3/raw_data/

scp -r raw_data/VOCdevkit/VOC2012/SegmentationClassGray/* root@${remote_ip}:/root/codes/models/official/cv/deeplabv3/raw_data/VOCdevkit/VOC2012/SegmentationClassGray/

On the cloud server, run model inference

cd /root/codes/models/official/cv/deeplabv3/

mkdir ascend310_infer_result && cd ascend310_infer_result

cp ../scripts/run_infer_310.sh ./

chmod a+x run_infer_310.sh

nohup ./run_infer_310.sh ../deeplab_v3_s8_200_183.mindir ./ ../ ../raw_data/voc_val_lst.txt 0 &

View the inference results with cat acc.log.

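On the cloud server, run quantized inference

Because of software limitations, amct_mindspore cannot be used here, so the post_quant.py step is replaced with an AIR export via export.py.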

cd /root/codes/models/official/cv/deeplabv3/ascend310_quant_infer

nohup python3 export_bin.py --model=deeplab_v3_s8 --data_root=../raw_data --data_lst=../raw_data/voc_val_lst.txt > data.log 2>&1 &

chmod a+x run_quant_infer.sh

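# Replace the post_quant.py step with export.py

# python3 post_quant.py --model=deeplab_v3_s8 --data_root=../raw_data --data_lst=../raw_data/voc_val_lst.txt --ckpt_file=../deeplab_v3_s8-200_183.ckpt

# export.py and the uploaded checkpoint are in the deeplabv3 root, and run_quant_infer.sh below reads ../deeplab_v3_s8_200_183.air, so run the export from the parent directory

cd ..

python3 export.py --ckpt_file=deeplab_v3_s8-200_183.ckpt --file_name=deeplab_v3_s8_200_183 --file_format=AIR --export_model=deeplab_v3_s8 --device_target=Ascend

cd ascend310_quant_infer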

nohup ./run_quant_infer.sh ../deeplab_v3_s8_200_183.air ./data/00_data/ ./data/01_label ./data/shape.npy &

View the inference results with cat acc.log.

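6. Troubleshooting

Problems the author ran into, with notes on how to resolve them.

Problem 1

dataset_sink_mode issue: graph compilation fails because the GPU SoftmaxCrossEntropyWithLogits kernel only accepts float32 inputs but received float16, meaning the network was running with float16 mixed precision on GPU. The amp_level and dataset_sink_mode changes in 3.2.2 (amp_level "O0" and no data sinking on GPU) address this.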

Traceback (most recent call last):

File "train.py", line 213, in <module>

train()

File "/mnt/data_0301_12t/xingchaolong/home/codes/gitee/mindspore_models/official/cv/deeplabv3/model_utils/moxing_adapter.py", line 105, in wrapped_func

run_func(*args, **kwargs)

File "train.py", line 209, in train

model.train(args.train_epochs, dataset, callbacks=cbs, dataset_sink_mode=(args.device_target != "CPU"))

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/train/model.py", line 722, in train

self._train(epoch,

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/train/model.py", line 504, in _train

self._train_dataset_sink_process(epoch, train_dataset, list_callback, cb_params, sink_size)

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/train/model.py", line 566, in _train_dataset_sink_process

outputs = self._train_network(*inputs)

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/nn/cell.py", line 404, in __call__

out = self.compile_and_run(*inputs)

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/nn/cell.py", line 682, in compile_and_run

self.compile(*inputs)

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/nn/cell.py", line 669, in compile

_cell_graph_executor.compile(self, *inputs, phase=self.phase, auto_parallel_mode=self._auto_parallel_mode)

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/common/api.py", line 548, in compile

result = self._graph_executor.compile(obj, args_list, phase, use_vm, self.queue_name)

TypeError: mindspore/ccsrc/runtime/device/gpu/kernel_info_setter.cc:355 PrintUnsupportedTypeException] Select GPU kernel op[SoftmaxCrossEntropyWithLogits] fail! Incompatible data type!

The supported data types are in[float32 float32], out[float32 float32]; , but get in [float16 float16 ] out [float16 float16 ]

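Problem 2

Insufficient GPU memory: reduce batch_size and/or set max_device_memory to match your GPU (see the notes in 3.2.2 and 3.3).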

[ERROR] RUNTIME_FRAMEWORK(1715024,7f1962ffd700,python3):2021-12-10-11:06:52.083.474 [mindspore/ccsrc/runtime/framework/actor/memory_manager_actor.cc:182] SetOpContextMemoryAllocFail] Device(id:0) memory isn't enough and alloc failed, kernel name: Default/network-TrainOneStepCell/network-BuildTrainNetwork/network-DeepLabV3/resnet-Resnet/layer3-SequentialCell/0-Bottleneck/bn3-BatchNorm2d/BatchNorm-op4408, alloc size: 142737408B.

[EXCEPTION] VM(1715024,7f1ab171c740,python3):2021-12-10-11:06:52.083.822 [mindspore/ccsrc/vm/backend.cc:835] RunGraph] The actor runs failed, actor name: kernel_graph_1

load_model ./resnet101_ascend_v120_imagenet2012_official_cv_bs32_acc78.ckpt success

Traceback (most recent call last):

File "train.py", line 221, in <module>

train()

File "/mnt/data_0301_12t/xingchaolong/home/codes/gitee/mindspore_models/official/cv/deeplabv3/model_utils/moxing_adapter.py", line 105, in wrapped_func

run_func(*args, **kwargs)

File "train.py", line 217, in train

model.train(args.train_epochs, dataset, callbacks=cbs, dataset_sink_mode=(args.device_target != "CPU"))

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/train/model.py", line 722, in train

self._train(epoch,

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/train/model.py", line 504, in _train

self._train_dataset_sink_process(epoch, train_dataset, list_callback, cb_params, sink_size)

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/train/model.py", line 566, in _train_dataset_sink_process

outputs = self._train_network(*inputs)

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/nn/cell.py", line 404, in __call__

out = self.compile_and_run(*inputs)

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/nn/cell.py", line 698, in compile_and_run

return _cell_graph_executor(self, *new_inputs, phase=self.phase)

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/common/api.py", line 627, in __call__

return self.run(obj, *args, phase=phase)

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/common/api.py", line 655, in run

return self._exec_pip(obj, *args, phase=phase_real)

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/common/api.py", line 78, in wrapper

results = fn(*arg, **kwargs)

File "/mnt/data_0301_12t/xingchaolong/home/pyenvs/env_mindspore_1.5.0/lib/python3.8/site-packages/mindspore/common/api.py", line 638, in _exec_pip

return self._graph_executor(args_list, phase)

RuntimeError: mindspore/ccsrc/vm/backend.cc:835 RunGraph] The actor runs failed, actor name: kernel_graph_1
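The allocation that fails in the log is 142737408 bytes (136.125 MiB). That is exactly the size of one float32 tensor of shape (32, 1024, 33, 33), which is what a layer3 BatchNorm output would be for batch_size 32 at crop_size 513 with output stride 16. This reading is our own inference. The log does not state it, and it does not record the batch size of the failing run. The arithmetic does show that activation memory grows linearly with batch_size, which is why lowering batch_size (or adjusting max_device_memory) is the first thing to try. The helpers below are illustrative.

```python
def tensor_bytes(shape, bytes_per_element=4):
    """Size of a dense tensor in bytes (4 bytes per element for float32)."""
    total = bytes_per_element
    for dim in shape:
        total *= dim
    return total


def halve(size):
    """Spatial size after a stride-2 layer with 'same'-style padding (ceiling division)."""
    return -(-size // 2)


# Assumption: crop_size 513 and four stride-2 stages (conv1, max pool, layer2, layer3),
# i.e. output stride 16 at the end of ResNet-101's layer3, which has 1024 channels.
size = 513
for _ in range(4):
    size = halve(size)  # 513 -> 257 -> 129 -> 65 -> 33

ALLOC_FROM_LOG = 142737408  # "alloc size: 142737408B" in the error above
for batch in (32, 16, 8):
    nbytes = tensor_bytes((batch, 1024, size, size))
    print(f"batch {batch}: {nbytes} B ({nbytes / 2**20:.3f} MiB)", nbytes == ALLOC_FROM_LOG)
```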

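Problem 3

Importing cv2 in postprocess.py fails because libGL.so.1 is missing. Install it with:

sudo apt install libgl1-mesa-glx

Error message: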

Traceback (most recent call last):

File "../postprocess.py", line 20, in <module>

import cv2

File "/root/pyenvs/env_mindspore_ascend_1.5.0/lib/python3.7/site-packages/cv2/__init__.py", line 8, in

from .cv2 import *

ImportError: libGL.so.1: cannot open shared object file: No such file or directory

