MindSpore Study Notes Series: Hands-on Reinforcement Learning with MindSpore (Part 1)

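The DQN Algorithm

Introduction

In the Q-learning algorithm covered earlier, we built a table, stored as a matrix, that holds the $Q$ value of every action in every state. Each action value $Q(s,a)$ in the table estimates the expected return from taking action $a$ in state $s$ and following some policy afterwards. Storing action values in a table, however, only works when the environment's states and actions are discrete and both spaces are small. The environments in our earlier code exercises, such as Cliff Walking, all fit this description. When the number of states or actions is very large, the approach breaks down. For example, if the state is an RGB image of size $210\times160\times3$, there are $256^{(210\times 160\times 3)}$ possible states, and storing a Q table of that magnitude in a computer is not realistic. Worse, when states or actions are continuous there are infinitely many state-action pairs, so a table cannot record their Q values at all. In such cases we need function approximation to estimate the Q values. The DQN algorithm introduced here handles problems with continuous states and discrete actions.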
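To get a feel for the size of $256^{(210\times 160\times 3)}$, the short sketch below counts its decimal digits with logarithms instead of building the integer. The variable names are illustrative only.

```python
import math

# One RGB frame: 210 x 160 pixels, 3 colour channels, 256 levels per value.
height, width, channels, levels = 210, 160, 3, 256
num_values = height * width * channels  # independent byte values per frame

# The number of distinct frames is levels ** num_values.
# Count its decimal digits via log10 rather than materialising the number.
num_digits = math.floor(num_values * math.log10(levels)) + 1

print("values per frame:", num_values)
print("decimal digits in the state count:", num_digits)
```

A state count with hundreds of thousands of digits rules out any table, which is why the Q values have to be approximated by a function.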

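DQN Code Practice

Next we move on to the hands-on code for DQN. The test environment, CartPole-v0, has a relatively simple state space of only 4 variables, so the network is simple as well: one fully connected hidden layer with 128 neurons and ReLU activation. For more complex environments, such as those that take images as input, a deep convolutional network is a better fit.

Starting with DQN, we use the rl_utils library. It contains helper functions prepared for Hands-on RL, such as plotting moving-average curves and computing advantage functions, so that different algorithms can share them.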

import random
import gym
import numpy as np
import collections
from tqdm import tqdm
import mindspore as ms
import matplotlib.pyplot as plt
import rl_utils


from mindspore import ops, nn

We first define the replay buffer class, which provides two main functions: adding data and sampling data.

class ReplayBuffer:
    ''' Experience replay buffer '''
    def __init__(self, capacity):
        self.buffer = collections.deque(maxlen=capacity)  # queue, first in first out

    def add(self, state, action, reward, next_state, done):
        self.buffer.append((state, action, reward, next_state, done))  # add one transition to the buffer

    def sample(self, batch_size):  # sample batch_size transitions from the buffer
        transitions = random.sample(self.buffer, batch_size)
        state, action, reward, next_state, done = zip(*transitions)
        return np.array(state), action, reward, np.array(next_state), done

    def size(self):  # number of transitions currently in the buffer
        return len(self.buffer)
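The two behaviours the buffer relies on, first-in-first-out eviction and uniform random sampling, can be seen in isolation with a tiny standalone sketch. The capacity of 3 and the dummy transitions are made up for illustration.

```python
import collections
import random

random.seed(0)

# A deque with maxlen drops its oldest item once it is full (FIFO eviction).
buffer = collections.deque(maxlen=3)
for step in range(5):
    # Dummy transition: (state, action, reward, next_state, done)
    buffer.append((step, 0, 1.0, step + 1, False))

oldest_state = buffer[0][0]  # transitions with state 0 and 1 have been evicted

# Uniformly sample a mini-batch without replacement, then regroup the
# transitions field by field, as ReplayBuffer.sample does.
batch = random.sample(list(buffer), 2)
states, actions, rewards, next_states, dones = zip(*batch)

print("buffer length:", len(buffer), "oldest state:", oldest_state)
print("sampled states:", states)
```

Sampling at random from past transitions, rather than training on consecutive steps, is what breaks the correlation between successive samples.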

Next comes the Q network with a single hidden layer.

class Qnet(nn.Cell):
    ''' Q network with one hidden layer '''
    def __init__(self, state_dim, hidden_dim, action_dim):
        super(Qnet, self).__init__()
        self.fc1 = nn.Dense(state_dim, hidden_dim)
        self.fc2 = nn.Dense(hidden_dim, action_dim)

    def construct(self, x):
        x = ops.relu(self.fc1(x))  # ReLU activation on the hidden layer
        return self.fc2(x)  # one Q value per action
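As a rough size check, the sketch below counts the trainable parameters of this two-layer network for CartPole. It assumes 4 state variables and a hidden width of 128 as stated above, 2 discrete actions, and dense layers that each carry a bias vector; `dense_params` is a hypothetical helper, not a MindSpore function.

```python
def dense_params(in_dim, out_dim):
    """Parameters of a fully connected layer: weight matrix plus bias vector."""
    return in_dim * out_dim + out_dim

# Assumed dimensions for CartPole with the network described above.
state_dim, hidden_dim, action_dim = 4, 128, 2

fc1_params = dense_params(state_dim, hidden_dim)   # input -> hidden
fc2_params = dense_params(hidden_dim, action_dim)  # hidden -> Q values
total_params = fc1_params + fc2_params

print(fc1_params, fc2_params, total_params)
```

A network of this size stands in for a table that could not be written down at all, since the CartPole state is continuous.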

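Now we get to the main part: the code for the DQN algorithm.

device = "cuda:0"  # kept from the PyTorch version; the DQN class stores it but never uses it
import mindspore as ms
from mindspore import nn, ops
from mindspore.ops import composite as C
from mindspore.ops import functional as F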

class DQN:
    ''' DQN algorithm '''
    def __init__(self, state_dim, hidden_dim, action_dim, learning_rate, gamma, epsilon, target_update, device):
        self.action_dim = action_dim
        self.q_net = Qnet(state_dim, hidden_dim, self.action_dim)  # Q network
        self.target_q_net = Qnet(state_dim, hidden_dim, self.action_dim)  # target network
        self.optimizer = ms.nn.Adam(self.q_net.trainable_params(), learning_rate=learning_rate)  # Adam optimizer
        self.gamma = gamma  # discount factor
        self.epsilon = epsilon  # epsilon-greedy exploration rate
        self.target_update = target_update  # target network update frequency
        self.count = 0  # counter of update steps
        self.device = device  # device (unused)
        self.q_net.to_float(ms.float16)  # run both networks in half precision
        self.target_q_net.to_float(ms.float16)

    def take_action(self, state):  # choose an action with the epsilon-greedy policy
        if np.random.random() < self.epsilon:
            action = np.random.randint(self.action_dim)
        else:
            state = ms.Tensor([state], dtype=ms.float32)
            action = self.q_net(state).argmax().asnumpy().item()
        return action

    def update(self, transition_dict):
        states = ms.Tensor(transition_dict['states'], dtype=ms.float32)
        actions = ms.Tensor(transition_dict['actions']).view(-1, 1)
        rewards = ms.Tensor(transition_dict['rewards'], dtype=ms.float32).view(-1, 1)
        next_states = ms.Tensor(transition_dict['next_states'], dtype=ms.float32)
        dones = ms.Tensor(transition_dict['dones'], dtype=ms.float32).view(-1, 1)

        def forward_pass(states, actions, rewards, next_states, dones):
            q_values = ops.gather_elements(self.q_net(states), 1, actions)  # Q values of the actions taken
            # Tensor.max(axis=1) returns the per-row maxima directly (no (values, indices) tuple),
            # so it must not be indexed with [0] as in PyTorch
            max_next_q_values = self.target_q_net(next_states).max(axis=1).view(-1, 1)  # max Q value of the next state
            q_targets = rewards + self.gamma * max_next_q_values * (1 - dones)  # TD target
            return ops.square(q_values - q_targets).mean()  # mean squared error loss

        # Differentiate only with respect to the Q network parameters held by the optimizer
        grad_fn = ms.value_and_grad(forward_pass, None, self.optimizer.parameters)
        loss, grads = grad_fn(states, actions, rewards, next_states, dones)
        self.optimizer(grads)

        if self.count % self.target_update == 0:
            # copy the Q network parameters into the target network
            ms.load_param_into_net(self.target_q_net, self.q_net.parameters_dict())
        self.count += 1
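The core of `update` is the TD target $r + \gamma \max_{a'} Q_{\text{target}}(s', a')\,(1 - \text{done})$ and the squared error against $Q(s, a)$. The sketch below reproduces that arithmetic on plain Python lists with made-up numbers, so the effect of the `done` flag can be checked by hand. `td_targets` and `mse_loss` are hypothetical helper names, not part of MindSpore or rl_utils.

```python
def td_targets(rewards, max_next_q, dones, gamma):
    """TD target per transition; a terminal transition (done=1) keeps only the reward."""
    return [r + gamma * q * (1.0 - d) for r, q, d in zip(rewards, max_next_q, dones)]

def mse_loss(q_values, targets):
    """Mean squared error between predicted Q values and TD targets."""
    return sum((q - t) ** 2 for q, t in zip(q_values, targets)) / len(targets)

gamma = 0.98
rewards = [1.0, 1.0]
max_next_q = [10.0, 10.0]  # max over actions of the target network output
dones = [0.0, 1.0]         # the second transition ends the episode
q_values = [10.0, 2.0]     # Q(s, a) predicted by the Q network

targets = td_targets(rewards, max_next_q, dones, gamma)
loss = mse_loss(q_values, targets)
print(targets, loss)
```

The maximum must be taken per transition, over that transition's own row of next-state Q values; otherwise every sample in the batch would share a single bootstrap value.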

With everything in place, we start training and look at the results. Later we will wrap the training loop into rl_utils to simplify the code of the algorithms that follow.

lr = 2e-3
num_episodes = 500
hidden_dim = 128
gamma = 0.98
epsilon = 0.01
target_update = 10
buffer_size = 10000
minimal_size = 500
batch_size = 64
device = "cuda"  # leftover from the PyTorch version; passed to DQN but not used

env_name = 'CartPole-v0'
env = gym.make(env_name)
random.seed(0)
np.random.seed(0)
env.seed(0)  # old gym API; newer gym versions use env.reset(seed=0) instead
ms.set_seed(0)  # MindSpore counterpart of torch.manual_seed(0)
replay_buffer = ReplayBuffer(buffer_size)
state_dim = env.observation_space.shape[0]
action_dim = env.action_space.n
agent = DQN(state_dim, hidden_dim, action_dim, lr, gamma, epsilon, target_update, device)

return_list = []
for i in range(10):
    with tqdm(total=int(num_episodes/10), desc='Iteration %d' % i) as pbar:
        for i_episode in range(int(num_episodes/10)):
            episode_return = 0
            state = env.reset()
            done = False
            while not done:
                action = agent.take_action(state)
                next_state, reward, done, _ = env.step(action)
                replay_buffer.add(state, action, reward, next_state, done)
                state = next_state
                episode_return += reward
                if replay_buffer.size() > minimal_size:  # train the Q network only once the buffer holds enough data
                    b_s, b_a, b_r, b_ns, b_d = replay_buffer.sample(batch_size)
                    transition_dict = {'states': b_s, 'actions': b_a, 'next_states': b_ns, 'rewards': b_r, 'dones': b_d}
                    agent.update(transition_dict)
            return_list.append(episode_return)
            if (i_episode+1) % 10 == 0:
                pbar.set_postfix({'episode': '%d' % (num_episodes/10 * i + i_episode+1), 'return': '%.3f' % np.mean(return_list[-10:])})
            pbar.update(1)

Iteration 0: 100%|████| 50/50 [00:00<00:00, 82.47it/s, episode=50, return=9.300]

Iteration 1: 100%|███| 50/50 [00:03<00:00, 15.77it/s, episode=100, return=9.200]

Iteration 2: 100%|███| 50/50 [00:03<00:00, 14.54it/s, episode=150, return=9.600]

Iteration 3: 100%|███| 50/50 [00:03<00:00, 16.07it/s, episode=200, return=9.400]

Iteration 4: 100%|███| 50/50 [00:02<00:00, 17.03it/s, episode=250, return=9.400]

Iteration 5: 100%|███| 50/50 [00:02<00:00, 17.97it/s, episode=300, return=9.100]

Iteration 6: 100%|███| 50/50 [00:03<00:00, 15.10it/s, episode=350, return=9.500]

Iteration 7: 100%|███| 50/50 [00:03<00:00, 16.61it/s, episode=400, return=9.100]

Iteration 8: 100%|███| 50/50 [00:02<00:00, 17.63it/s, episode=450, return=9.600]

Iteration 9: 100%|███| 50/50 [00:03<00:00, 16.59it/s, episode=500, return=9.500]

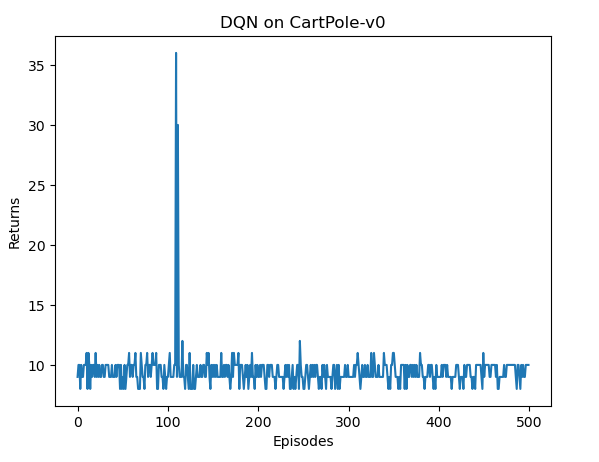

episodes_list = list(range(len(return_list)))
plt.plot(episodes_list,return_list)
plt.xlabel('Episodes')
plt.ylabel('Returns')
plt.title('DQN on {}'.format(env_name))
plt.show()

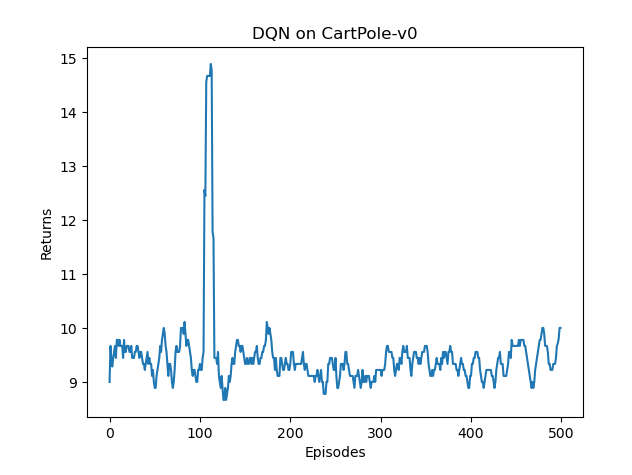

mv_return = rl_utils.moving_average(return_list, 9)
plt.plot(episodes_list, mv_return)
plt.xlabel('Episodes')
plt.ylabel('Returns')
plt.title('DQN on {}'.format(env_name))
plt.show()
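The smoothing step can be illustrated without rl_utils. The function below is a minimal sketch of a centered moving average whose window shrinks at both ends, so the output has the same length as the input and can be plotted against the same episode list. It is an assumption about what such a helper does, not the actual `rl_utils.moving_average` implementation, and the name `centered_moving_average` is hypothetical.

```python
def centered_moving_average(values, window):
    """Average each point with its neighbours inside an odd-sized window.

    Near the two ends the window is truncated, so len(output) == len(values).
    """
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        smoothed.append(sum(values[lo:hi]) / (hi - lo))
    return smoothed

returns = [1, 2, 3, 4, 5]
smoothed = centered_moving_average(returns, 3)
print(smoothed)
```

Episode returns in reinforcement learning are noisy, so a smoothed curve usually shows the learning trend more clearly than the raw one.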

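DQN with Image Input

In all the reinforcement learning environments so far, the input has been a non-image state (for example, the cart's position and velocity in the environment above). In some video games, however, this state information is not directly available; what the agent can observe is the image on the screen. If the agent is to play the game the way a human does, it has to learn to make decisions with images as states. DQN still applies here: we add convolutional layers to the network to extract image features, which gives reinforcement learning with image input. The code differs from the code above mainly in the structure of the $Q$ network and in the input data; the rest is essentially unchanged. The most recent few frames are usually fed to the network together, because a single frame cannot convey the dynamics of the environment. The code for DQN with image input follows. Because training takes a long time, we do not show training results here.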

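class ConvolutionalQnet(nn.Cell):
    ''' Q network with convolutional layers '''
    def __init__(self, action_dim, in_channels=4):
        super(ConvolutionalQnet, self).__init__()
        # Assumes 84x84 input frames, the size implied by the 7 * 7 * 64 input of fc4.
        # pad_mode='valid' is required: MindSpore's Conv2d defaults to 'same' padding,
        # which would leave an 11x11 feature map instead of 7x7.
        self.conv1 = nn.Conv2d(in_channels, 32, kernel_size=8, stride=4, pad_mode='valid')
        self.conv2 = nn.Conv2d(32, 64, kernel_size=4, stride=2, pad_mode='valid')
        self.conv3 = nn.Conv2d(64, 64, kernel_size=3, stride=1, pad_mode='valid')
        self.fc4 = nn.Dense(7 * 7 * 64, 512)
        self.head = nn.Dense(512, action_dim)  # one Q value per action

    def construct(self, x):  # MindSpore cells implement construct rather than forward
        x = x.astype(ms.float32) / 255  # scale pixel values to [0, 1]
        x = ops.relu(self.conv1(x))
        x = ops.relu(self.conv2(x))
        x = ops.relu(self.conv3(x))
        x = ops.relu(self.fc4(x.view(x.shape[0], -1)))  # flatten to (batch, 7 * 7 * 64)
        return self.head(x)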
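The `7 * 7 * 64` input size of `fc4` follows from the three convolutions when no padding is applied. The sketch below traces the spatial size layer by layer, assuming 84×84 input frames (an assumption; the notes do not state the frame size) and unpadded convolutions. `conv_out` is a hypothetical helper.

```python
def conv_out(size, kernel, stride):
    """Output length along one spatial axis of an unpadded convolution."""
    return (size - kernel) // stride + 1

size = 84  # assumed height and width of each input frame
sizes = [size]
for kernel, stride in [(8, 4), (4, 2), (3, 1)]:  # conv1, conv2, conv3
    size = conv_out(size, kernel, stride)
    sizes.append(size)

flat_features = size * size * 64  # 64 output channels after conv3
print(sizes, flat_features)
```

With padding that preserves `ceil(size / stride)` instead, the same layers would produce a larger feature map and the first dense layer would no longer match.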

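Summary

In this chapter we studied the DQN algorithm. Its main idea is to model the $Q$ function of the optimal policy with a neural network and to update the parameters following the idea of Q-learning. For stable and efficient training, DQN introduces two key components, experience replay and a target network, which make the algorithm perform better in practice. DQN is the foundation of deep reinforcement learning; mastering it is the real entry point to the field, and many more deep reinforcement learning algorithms await exploration.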