Experience Java Simple Inference Demo


Overview

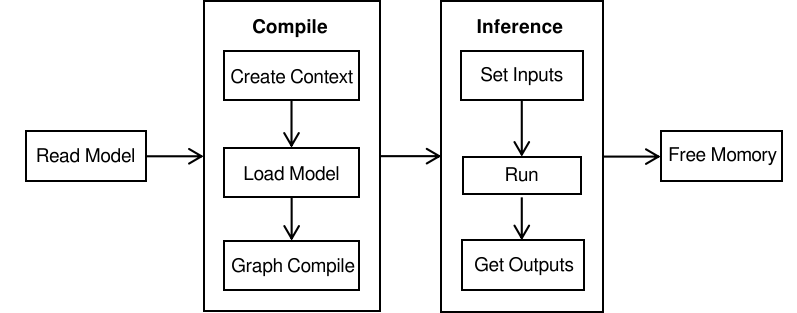

This tutorial provides an example program that performs inference with MindSpore Lite. It demonstrates the basic on-device inference process with the MindSpore Lite Java API: feeding in random input data, executing inference, and printing the inference result, so that you can quickly learn how to use the inference-related Java APIs. The example runs the MobileNetV2 model on randomly generated input data and prints the output data. The code is stored in the mindspore/lite/examples/quick_start_java directory.

The MindSpore Lite inference steps are as follows:

1. Load the model: Read the .ms model converted by the model conversion tool from the file system and import it using loadModel.

2. Create and configure context: Create an MSConfig configuration context to hold the basic configuration parameters that a session needs to guide graph build and execution, including deviceType (device type), threadNum (number of threads), cpuBindMode (CPU binding mode), and enable_float16 (whether to preferentially use the float16 operator).

3. Create a session: Create a LiteSession and call its init method to apply the MSConfig obtained in the previous step to the session.

4. Build a graph: Before a graph is executed, call the compileGraph interface of LiteSession to build it. Graph build includes subgraph partition and operator selection and scheduling, and it takes a long time. It is therefore recommended to create one LiteSession, build the graph once, and then perform inference multiple times.

5. Input data: Before the graph is executed, fill the input tensor with data.

6. Perform inference: Use runGraph of LiteSession to perform model inference.

7. Obtain the output: After the graph execution is complete, obtain the inference result from the output tensor.

8. Release the memory: When the MindSpore Lite inference framework is no longer needed, release the created LiteSession and Model.

To view the advanced usage of MindSpore Lite, see Using Runtime to Perform Inference (Java).

Building and Running

Environment requirements

Build

Run the build script in the mindspore/lite/examples/quick_start_java directory to automatically download the MindSpore Lite inference framework library and model files and build the demo.

    bash build.sh

If the MindSpore Lite inference framework fails to be downloaded, manually download the MindSpore Lite model inference framework mindspore-lite-{version}-linux-x64.tar.gz whose hardware platform is CPU and operating system is Ubuntu-x64. Decompress the package and copy all so and jar files in runtime/lib/ and runtime/third_party/ to the mindspore/lite/examples/quick_start_java/lib directory.

If the MobileNetV2 model fails to be downloaded, manually download the model file mobilenetv2.ms and copy it to the mindspore/lite/examples/quick_start_java/model/ directory.

After manually downloading the files and placing them in the specified locations, run the build.sh script again to complete the build.

Inference

After the build, go to the mindspore/lite/examples/quick_start_java/target directory and run the following commands to experience MindSpore Lite inference on the MobileNetV2 model:

    export LD_LIBRARY_PATH=${LD_LIBRARY_PATH}:../lib/
    java -Djava.library.path=../lib/ -classpath .:./quick_start_java.jar:../lib/mindspore-lite-java.jar com.mindspore.lite.demo.Main ../model/mobilenetv2.ms

After the execution, the following information is displayed: the shape of the output tensor and the first 10 values of its data.

    out tensor shape: [1,1000,] and out data: 5.4091015E-5 4.030303E-4 3.032344E-4 4.0029243E-4 2.2730739E-4 8.366581E-5 2.629827E-4 3.512394E-4 2.879536E-4 1.9557697E-4
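The [1,1000] shape suggests one score per class for a single input. As a minimal sketch of how such an output could be interpreted, assuming the values are per-class scores, the snippet below picks the index of the largest one. The class name TopClassSketch and the helper argMax are hypothetical and not part of the demo; the sample values are the first four numbers from the output above.

```java
final class TopClassSketch {
    /** Returns the index of the largest value, or -1 for an empty array. */
    static int argMax(float[] scores) {
        int best = -1;
        for (int i = 0; i < scores.length; i++) {
            if (best < 0 || scores[i] > scores[best]) {
                best = i;
            }
        }
        return best;
    }

    public static void main(String[] args) {
        // First four values of the sample output shown in this tutorial.
        float[] scores = {5.4091015E-5f, 4.030303E-4f, 3.032344E-4f, 4.0029243E-4f};
        System.out.println(argMax(scores)); // prints 1
    }
}
```

Because the demo feeds random input, the winning index carries no meaning here; the same helper only becomes informative when the input is a real, correctly preprocessed image.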

Model Loading

Read the MindSpore Lite model from the file system and use the model.loadModel function to import the model for parsing.

    boolean ret = model.loadModel(modelPath);
    if (!ret) {
        System.err.println("Load model failed, model path is " + modelPath);
        return;
    }

Model Build

Model build includes context configuration creation, session creation, and graph build.

    private static boolean compile() {
        MSConfig msConfig = new MSConfig();
        // You can set config through Init Api or use the default parameters directly.
        // The default parameter is that the backend type is DeviceType.DT_CPU, and the number of threads is 2.
        boolean ret = msConfig.init(DeviceType.DT_CPU, 2);
        if (!ret) {
            System.err.println("Init context failed");
            return false;
        }

        // Create the MindSpore lite session.
        session = new LiteSession();
        ret = session.init(msConfig);
        msConfig.free();
        if (!ret) {
            System.err.println("Create session failed");
            model.free();
            return false;
        }

        // Compile graph.
        ret = session.compileGraph(model);
        if (!ret) {
            System.err.println("Compile graph failed");
            model.free();
            return false;
        }
        return true;
    }

Model Inference

Model inference includes data input, inference execution, and output obtaining. In this example, the input data is randomly generated, and the output result is printed after inference.

    private static boolean run() {
        MSTensor inputTensor = session.getInputsByTensorName("graph_input-173");
        if (inputTensor.getDataType() != DataType.kNumberTypeFloat32) {
            System.err.println("Input tensor data type is not float, the data type is " + inputTensor.getDataType());
            return false;
        }

        // Generate random data.
        int elementNums = inputTensor.elementsNum();
        float[] randomData = generateArray(elementNums);
        byte[] inputData = floatArrayToByteArray(randomData);

        // Set input data.
        inputTensor.setData(inputData);

        // Run inference.
        boolean ret = session.runGraph();
        if (!ret) {
            System.err.println("MindSpore Lite run failed.");
            return false;
        }

        // Get output tensor data.
        MSTensor outTensor = session.getOutputByTensorName("Softmax-65");

        // Print the output tensor shape and data.
        StringBuilder msgSb = new StringBuilder();
        msgSb.append("out tensor shape: [");
        int[] shape = outTensor.getShape();
        for (int dim : shape) {
            msgSb.append(dim).append(",");
        }
        msgSb.append("]");
        if (outTensor.getDataType() != DataType.kNumberTypeFloat32) {
            System.err.println("Output tensor data type is not float, the data type is " + outTensor.getDataType());
            return false;
        }
        float[] result = outTensor.getFloatData();
        if (result == null) {
            System.err.println("getFloatData returned null");
            return false;
        }
        msgSb.append(" and out data:");
        // Print at most the first 10 values.
        for (int i = 0; i < 10 && i < outTensor.elementsNum(); i++) {
            msgSb.append(" ").append(result[i]);
        }
        System.out.println(msgSb.toString());
        return true;
    }
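The run method above calls generateArray and floatArrayToByteArray, which are not shown in this section. The following is a minimal sketch of what such helpers could look like, not necessarily the demo's own implementation. It assumes the values are uniform random floats in [0, 1) and that the tensor expects 4-byte floats in the platform's native byte order; the wrapper class name RandomInputSketch is hypothetical.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.util.Random;

final class RandomInputSketch {
    /** Returns len floats drawn uniformly from [0, 1). */
    static float[] generateArray(int len) {
        Random rand = new Random();
        float[] arr = new float[len];
        for (int i = 0; i < len; i++) {
            arr[i] = rand.nextFloat();
        }
        return arr;
    }

    /** Packs each float into 4 bytes, using the native byte order (an assumption). */
    static byte[] floatArrayToByteArray(float[] floats) {
        ByteBuffer buffer = ByteBuffer.allocate(Float.BYTES * floats.length);
        buffer.order(ByteOrder.nativeOrder());
        // The float view writes through to the backing byte array.
        buffer.asFloatBuffer().put(floats);
        return buffer.array();
    }

    public static void main(String[] args) {
        float[] data = generateArray(4);
        byte[] bytes = floatArrayToByteArray(data);
        System.out.println(bytes.length); // prints 16 (4 floats * 4 bytes)
    }
}
```

The byte array length is always four times the element count, which is why run sizes the random data from inputTensor.elementsNum().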

Memory Release

When the MindSpore Lite inference framework is no longer needed, release the created LiteSession and Model.

    // Delete session buffer.
    session.free();
    // Delete model buffer.
    model.free();
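One way to make sure both objects are released even when inference fails part-way is to put the free calls in a finally block. The sketch below illustrates only that pattern: NativeHandle is a hypothetical stand-in for objects such as LiteSession and Model, and runAndRelease is an illustrative helper, not part of the MindSpore Lite API.

```java
import java.util.ArrayList;
import java.util.List;

final class ReleaseOrderSketch {
    /** Hypothetical stand-in for a native-backed object with a free() method. */
    static final class NativeHandle {
        private final String name;
        private final List<String> log;

        NativeHandle(String name, List<String> log) {
            this.name = name;
            this.log = log;
        }

        void free() {
            log.add("free " + name);
        }
    }

    /** Runs work, then frees the session before the model, even if work throws. */
    static void runAndRelease(Runnable work, List<String> log) {
        NativeHandle model = new NativeHandle("model", log);
        NativeHandle session = new NativeHandle("session", log);
        try {
            work.run();
        } finally {
            session.free();
            model.free();
        }
    }

    public static void main(String[] args) {
        List<String> log = new ArrayList<>();
        runAndRelease(() -> log.add("infer"), log);
        System.out.println(log); // prints [infer, free session, free model]
    }
}
```

The session is freed before the model here to mirror the order used in this tutorial.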