
Overview

This section describes the Huawei Ascend AI full-stack solution and where MindSpore fits in it. Developers interested in MindSpore can visit the MindSpore community and click Watch, Star, and Fork.

Introduction to MindSpore

Overall Architecture

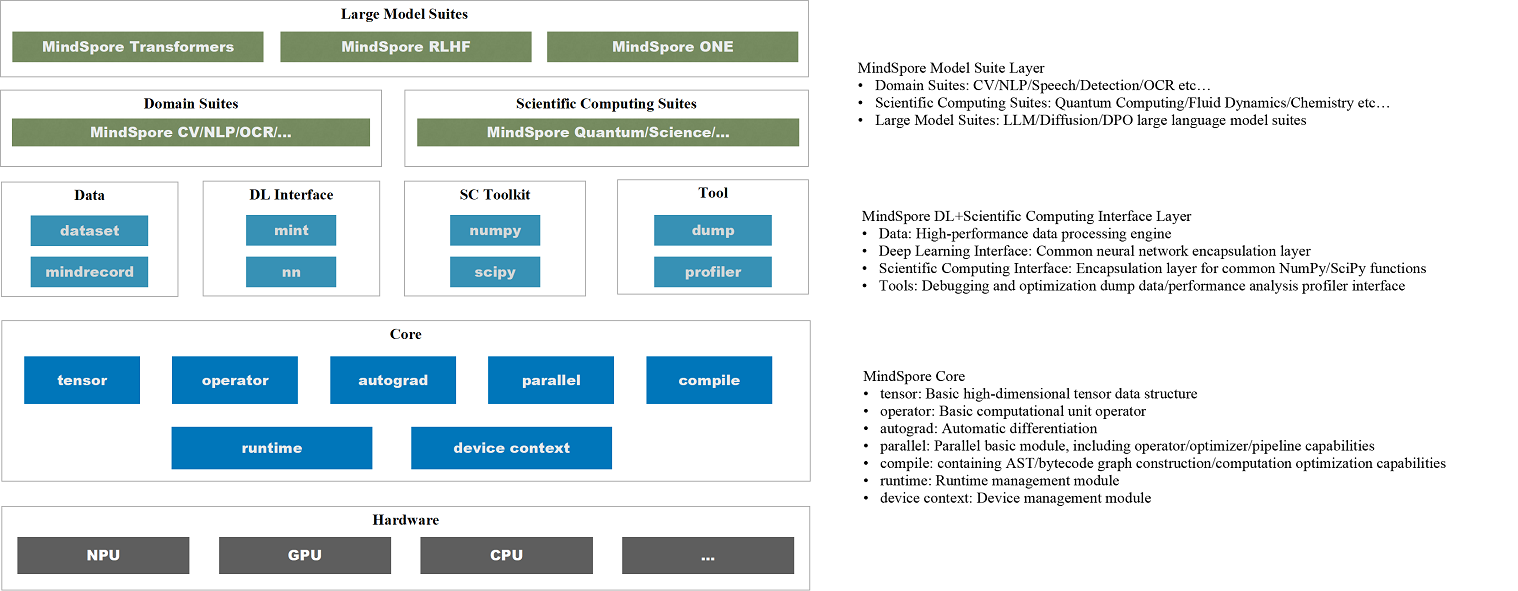

The overall architecture of MindSpore is as follows:

Model Suite: Provides developers with ready-to-use models and development kits, such as the large model suite MindSpore Transformers, MindSpore ONE, and scientific computing libraries for hot research areas;

Deep Learning + Scientific Computing: Provides developers with various Python interfaces required for AI model development, maximizing compatibility with developers' habits in the Python ecosystem;

Core: As the core of the AI framework, it provides the Tensor data structure, basic operators, the autograd module for automatic differentiation, the Parallel module for parallel computing, compilation capabilities, and the runtime management module.

Design Philosophy

MindSpore is a full-scenario deep learning framework designed to achieve three major goals: easy development, efficient execution, and unified deployment across all scenarios. Easy development means friendly APIs and low debugging difficulty. Efficient execution covers computational efficiency, data preprocessing efficiency, and distributed training efficiency. Unified deployment across all scenarios means the framework supports cloud, edge, and device-side scenarios simultaneously.

Introduction to Huawei Ascend AI Full-Stack Solution

Ascend computing is a full-stack AI computing infrastructure and application based on the Ascend series processors. It includes the Ascend series chips, Atlas series hardware, CANN chip enablement, MindSpore AI framework, ModelArts, and MindX application enablement.

The Huawei Atlas AI computing solution is based on Ascend series AI processors. It uses product forms such as modules, cards, edge stations, servers, and clusters to build an all-scenario AI infrastructure for device, edge, and cloud. It covers data center and intelligent edge solutions, as well as the entire inference and training processes in the deep learning field.

The Ascend AI full stack is shown below:

The functions of each module are described as follows:

Ascend Application Enablement: AI platform or service capabilities that Huawei's major product lines provide based on MindSpore.

MindSpore: A unified training and inference framework that supports device, edge, and cloud scenarios, both independently and collaboratively.

CANN: A driver layer that enables Ascend chips.

Compute Resources: Ascend series IP, chips, and servers.

For details, see the Huawei Ascend official website.

Joining the Community

Every developer is welcome to join the MindSpore community and contribute to this all-scenario AI framework.

MindSpore official website: Provides comprehensive MindSpore information, including installation guides, tutorials, documentation, community, resources, and news (learn more).

MindSpore code:

MindSpore AtomGit: Click Watch, Star, and Fork to track the latest progress of MindSpore, discuss issues, and commit code.

MindSpore GitHub: A mirror of the MindSpore code hosted on AtomGit. Developers who are accustomed to using GitHub can learn MindSpore and view the latest code implementation here.

MindSpore forum: We are dedicated to serving every developer. You can find your voice in MindSpore, regardless of whether you are an entry-level developer or a master. Let's learn and grow together. (Learn more)