Multimodal Analogical Reasoning over Knowledge Graphs Based on MindSpore


Conference

ICLR 2023

Paper URL

https://openreview.net/forum?id=NRHajbzg8y0P

Code URL

https://github.com/mindspore-lab/models/tree/master/research/ZJU/mkg_analogy

As an open-source AI framework, MindSpore offers researchers and developers device-edge-cloud synergy, simplified development, high performance, and security and reliability. It has supported over 1000 AI conference papers published by universities and research institutions. The paper reviewed here, from the College of Computer Science and Technology, Zhejiang University, was published at ICLR 2023. For more paper reviews and open-source code implementations, visit the Models repository.

01 Background

Analogical reasoning is the ability to perceive and use the relational similarity between two situations or events. It plays a significant role in human cognition and in fields such as education and creativity. Researchers have explored combining analogical reasoning with AI, with applications in computer vision (CV) and natural language processing (NLP). In CV, visual input is combined with relations, structures, and analogical reasoning to test how well models understand and reason about basic graphics. In NLP, the textual analogical reasoning ability of models is verified through linear word analogy. Most of this work follows a fixed analogy format to give a preliminary analysis of the analogical reasoning ability of deep learning models, but it is limited to a single modality and does not consider whether neural networks can capture analogy information across modalities. However, Mayer's cognitive theory points out that humans usually exhibit better analogical reasoning when given multimodal resources. So, do AI models possess this property?


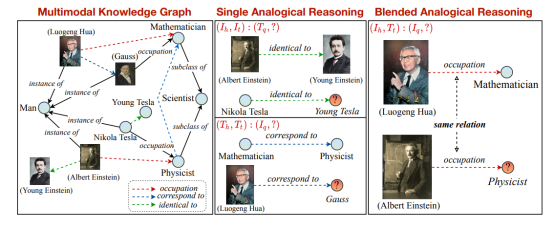

This paper proposes and formally defines a multimodal analogical reasoning task over knowledge graphs. To support the task, the paper constructs a multimodal analogical reasoning dataset (MARS) and a multimodal knowledge graph dataset (MarKG). To evaluate the multimodal analogical reasoning process, the paper runs comprehensive experiments on MARS with multimodal knowledge graph embedding baselines and multimodal pre-trained Transformer baselines, guided by psychological theories. The paper also proposes MarT, a novel multimodal analogical reasoning framework that can be plugged into any multimodal pre-trained Transformer model and improves analogical reasoning performance.


02 Team Introduction

Zhang Ningyu, associate professor at Zhejiang University, has published many papers in high-level international academic journals and conferences. His representative works include KnowPrompt, DeepKE, EasyEdit, and OceanGPT.

03 Paper Introduction

Figure 1

Figure 2

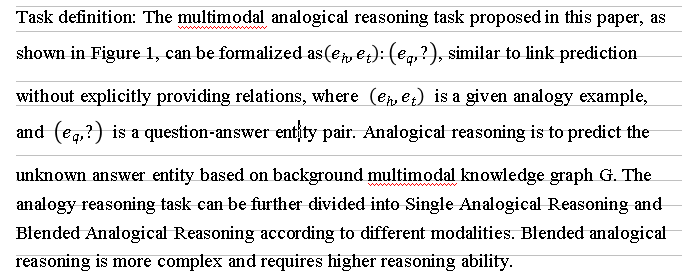

Figure 2 shows how the multimodal analogical reasoning dataset MARS and the underlying multimodal knowledge graph MarKG are constructed. First, seed entities and relations are collected from two text analogical reasoning datasets, E-KAR and BATS. These entities and relations are then mapped to the large knowledge base Wikidata, unified, and standardized. Next, entity images are retrieved from the Google image search engine and the multimodal dataset LAION-5B, and a series of filters removes low-quality images. Finally, high-quality analogy data is sampled to construct the MARS dataset.

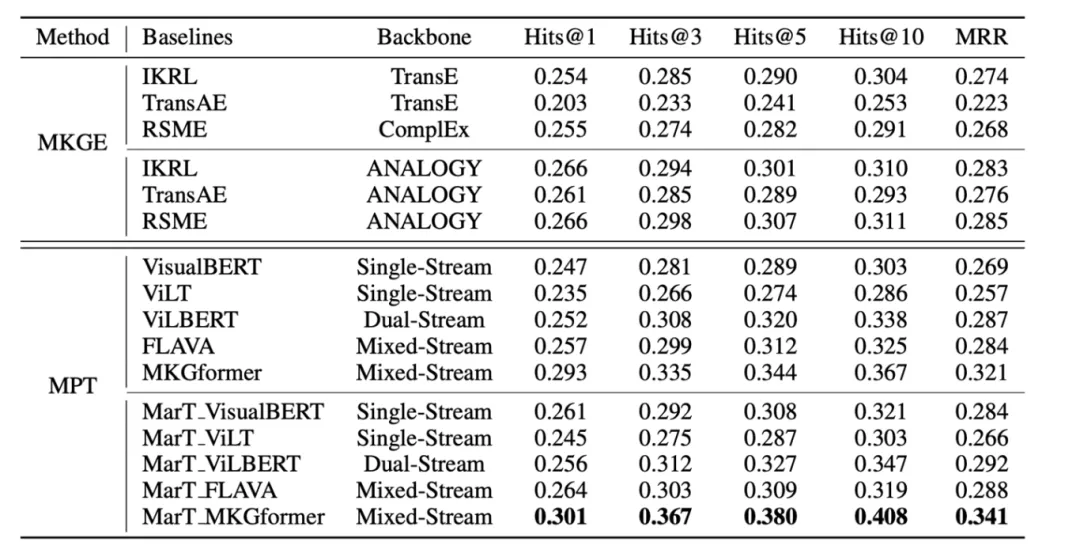

The paper tests some baseline models, including three multimodal knowledge graph embedding models (IKRL, TransAE, RSME) and five multimodal pre-trained models based on Transformers (VisualBERT, ViLT, ViLBERT, FLAVA, and MKGformer).

3.1 Multimodal Knowledge Graph Embedding Models

For multimodal knowledge graph embedding models, the paper adopts a pipeline approach to analogical reasoning with three steps: abduction, mapping, and induction. Abduction predicts the potential relation between the two entities of the analogy example, mapping applies the predicted relation to the entity of the analogy problem, and induction predicts the final analogy answer entity.


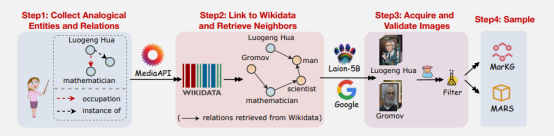
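The three pipeline steps can be sketched with a toy example. This is a minimal illustration, not the paper's implementation: it assumes a TransE-style translation score (head + relation ≈ tail), and every entity name, relation name, and embedding value below is made up.

```python
import math

# Toy 2-d embeddings; all names and values are hypothetical.
ENTITY = {
    "paris": (1.0, 0.0),
    "france": (1.0, 1.0),
    "tokyo": (3.0, 0.0),
    "japan": (3.0, 1.0),
    "sushi": (3.0, -2.0),
}
RELATION = {
    "capital_of": (0.0, 1.0),
    "famous_for": (0.0, -2.0),
}


def translate(entity_vec, relation_vec):
    """Return entity + relation (the TransE-style translation, an assumption)."""
    return tuple(e + r for e, r in zip(entity_vec, relation_vec))


def abduction(head, tail):
    """Step 1: pick the relation that best explains the example pair."""
    h, t = ENTITY[head], ENTITY[tail]
    return min(RELATION, key=lambda r: math.dist(translate(h, RELATION[r]), t))


def induction(question, relation):
    """Step 3: pick the entity closest to question + relation."""
    target = translate(ENTITY[question], RELATION[relation])
    candidates = [name for name in ENTITY if name != question]
    return min(candidates, key=lambda name: math.dist(ENTITY[name], target))


def solve_analogy(head, tail, question):
    relation = abduction(head, tail)
    # Step 2 (mapping): reuse the abduced relation for the question entity.
    return relation, induction(question, relation)
```

Calling `solve_analogy("paris", "france", "tokyo")` abduces `capital_of` from the example pair and then ranks the remaining entities for the question entity. A real system would replace the toy vectors with embeddings learned by a multimodal knowledge graph embedding model.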

3.2 Multimodal Pre-Trained Models

The paper treats each entity and relation as a special token added to the vocabulary of pre-trained models and uses learnable vectors to represent them. Inspired by previous research, the paper designs a masked entity and relation modeling task similar to Masked Language Modeling (MLM) to learn these vectors, making them contain information about entities and relations. First, the multimodal Transformer model is pre-trained on the MarKG dataset. The MarKG dataset contains text description information, image information, and relation information between entities, where the model is expected to learn representations of entities and relations from these multi-source information. To this end, the paper designs a prompt template to let the model predict the entity or relationship corresponding to the [MASK] token. In addition, the paper also provides different modal information of entities for the model, including text descriptions and images.
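The masked entity and relation modeling idea can be sketched as follows. The token names and the space-joined template are assumptions for illustration only; the paper's actual prompt template also carries text descriptions and images, which are omitted here.

```python
MASK = "[MASK]"


def masked_samples(head, relation, tail):
    """Build one training sample per masked position of a triple.

    Each sample is (prompt, label): the prompt hides one element of the
    triple behind [MASK], and the label is the special token to predict.
    The template is hypothetical, not the one used in the paper.
    """
    triple = (head, relation, tail)
    samples = []
    for position, label in enumerate(triple):
        tokens = list(triple)
        tokens[position] = MASK
        samples.append((" ".join(tokens), label))
    return samples
```

For a triple written with hypothetical special tokens such as `("[E1]", "[R7]", "[E2]")`, this yields three samples, one each for predicting the head entity, the relation, and the tail entity.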

After pre-training, the Transformer model is applied to the downstream dataset MARS using analogical reasoning prompts with explicit structure mapping. The input is divided into two parts: the part to the left of "||" corresponds to the abduction step in the pipeline approach, and the part to the right of "||" corresponds to the induction step. The mapping step is completed inside the model.

A special token [R] represents the potential relation between the analogy example entities, and each entity in the input is replaced with the entity embedding learned during pre-training. Finally, the analogy answer entity is obtained by predicting, over the special-token vocabulary, the token that fills [MASK].

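A small sketch of how such a two-part input might be assembled is shown below. Only the "||" separator, the [R] token, and the [MASK] token come from the description above; the `[ENT_i]` token format and the word order inside each part are assumptions.

```python
def entity_token(entity_id):
    """Hypothetical special-token name for an entity added to the vocabulary."""
    return f"[ENT_{entity_id}]"


def build_analogy_prompt(head_id, tail_id, question_id):
    """Assemble the downstream input.

    Left of "||": the analogy example, with [R] standing for its latent
    relation (abduction side). Right of "||": the question entity, the same
    [R], and a [MASK] to be filled with the answer entity (induction side).
    """
    left = f"{entity_token(head_id)} [R] {entity_token(tail_id)}"
    right = f"{entity_token(question_id)} [R] [MASK]"
    return f"{left} || {right}"
```

In a real model, each `[ENT_i]` token would be looked up in the embedding table learned during pre-training, and the prediction at [MASK] would be restricted to the entity special tokens.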

3.3 MarT: A Multimodal Analogical Reasoning Framework with Transformer

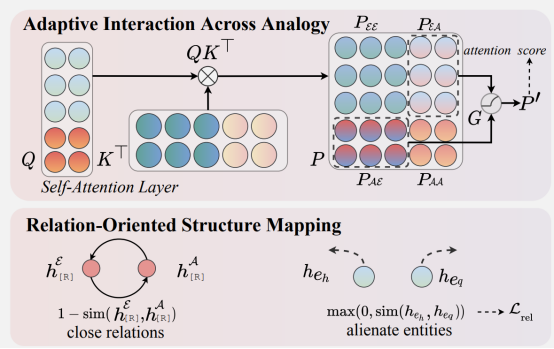

Although the above method lets pre-trained Transformer models perform multimodal analogical reasoning, it treats the abduction and induction steps only superficially and ignores the fine-grained association between the analogy example and the analogy problem-answer pair. The paper therefore proposes the MarT framework for Transformer models, which consists of two modules: adaptive interaction across analogy and relation-oriented structure mapping.

3.4 Adaptive Interaction Across Analogy

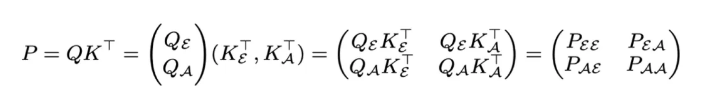

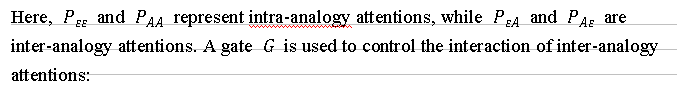

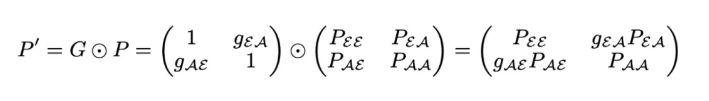

With the analogy prompt template described earlier, the analogy example and the analogy problem-answer pair are concatenated and fed into the Transformer model, so the two parts interact to some extent during attention calculation. This interaction is asymmetric: the analogy example is crucial for predicting the analogy answer, whereas the analogy problem-answer pair may contribute little to modeling the analogy example. Moreover, how much the analogy example helps varies from case to case. The paper therefore uses an adaptive association gate to adjust the degree of interaction between the two parts, decomposing the attention calculation into attention within each part and attention across the two parts.
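The gating idea can be illustrated with a simplified sketch. This is not the paper's formulation: it assumes single-head dot-product attention in which each token vector serves as query, key, and value, and it takes the two gate values as fixed inputs, whereas the paper's gate is adaptive. All function names are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    return [sum(w * v[d] for w, v in zip(weights, values))
            for d in range(len(values[0]))]


def gated_cross_analogy_attention(example, question, gate_example, gate_question):
    """Combine intra-part attention with gated cross-part attention.

    example / question: lists of token vectors for the two prompt parts.
    gate_example: how much example tokens read from the question part.
    gate_question: how much question tokens read from the example part.
    """
    def mix(own, other, gate):
        mixed = []
        for query in own:
            intra = attend(query, own, own)      # attention within the part
            inter = attend(query, other, other)  # attention across parts
            mixed.append([a + gate * b for a, b in zip(intra, inter)])
        return mixed

    return (mix(example, question, gate_example),
            mix(question, example, gate_question))
```

Setting `gate_example` to 0 and `gate_question` to 1 mimics the asymmetric case described above: the question part reads from the analogy example, while the example part is modeled on its own.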

3.5 Relation-Oriented Structure Mapping

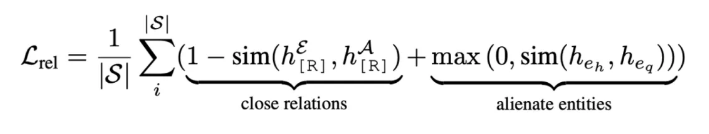

Structure mapping theory holds that, in analogical reasoning, mapping relational structure matters more than surface similarity between objects. For example, a battery is analogous to a reservoir because both store potential, not because both are cylindrical. Inspired by this, the paper proposes a relaxation loss for analogical reasoning that encourages the model to focus on transferring relational structures.

Here, h_[R] denotes the hidden feature of the special token [R] output by the MLM head for the analogy example, and the loss is computed with cosine similarity.
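As a rough illustration of a cosine-similarity-based loss on the [R] feature, the sketch below averages 1 minus the cosine similarity between each [R] hidden feature and a target relation vector. The choice of target vector and the exact form of the loss are assumptions; the paper's relaxation loss may include further terms.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def relation_alignment_loss(hidden_r_features, target_relations):
    """Mean of (1 - cosine similarity) over a batch.

    hidden_r_features: one [R] hidden feature per analogy example.
    target_relations: one target relation vector per example (hypothetical).
    The loss is 0 when every pair points in the same direction.
    """
    losses = [1.0 - cosine_similarity(h, r)
              for h, r in zip(hidden_r_features, target_relations)]
    return sum(losses) / len(losses)
```

Because cosine similarity ignores vector length, a loss of this shape rewards the [R] feature for pointing in the same direction as the relation representation, which fits the goal of transferring relational structure instead of matching surface features.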

04 Experiment Results

According to the results reported in the paper, the multimodal knowledge graph embedding (MKGE) methods and the multimodal pre-trained Transformer (MPT) methods achieve comparable performance on the MARS dataset. Adding analogy modules to the models improves performance significantly. Specifically, the MKGE methods improve markedly on the Hits@k and MRR metrics when ANALOGY is used as the backbone, and the MPT methods improve markedly after incorporating the MarT framework. MarT_MKGformer performs best, possibly because MKGformer is designed for multimodal knowledge graph tasks and is therefore better suited to them. The paper provides a leaderboard at https://zjunlp.github.io/project/MKG_Analogy/.
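For readers unfamiliar with the two ranking metrics, the sketch below shows how Hits@k and MRR are computed from the rank of the correct answer entity in each test query. The function names are illustrative, and the ranks are 1-based.

```python
def hits_at_k(ranks, k):
    """Fraction of queries whose correct answer is ranked in the top k."""
    return sum(1 for rank in ranks if rank <= k) / len(ranks)


def mean_reciprocal_rank(ranks):
    """Average of 1 / rank of the correct answer over all queries."""
    return sum(1.0 / rank for rank in ranks) / len(ranks)
```

For example, with ranks `[1, 3, 2, 10]`, Hits@1 is 0.25 and Hits@3 is 0.75. Higher values are better for both metrics, and MRR rewards placing the correct entity near the top even when it is not ranked first.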

05 Summary and Prospects

Analogy is a fundamental ability of human intelligence and can, to some extent, be considered one of its sources. People understand and solve problems by comparing one concept or situation with a similar one. This helps them grasp abstract concepts through familiar ones and apply their experience with the familiar concepts to new problems. The paper proposes a multimodal analogical reasoning task based on knowledge graphs, formally defines the task, and provides a multimodal analogical reasoning dataset, MARS, and a multimodal knowledge graph dataset, MarKG. Experiments on multiple knowledge graph embedding models and pre-trained Transformer models demonstrate both the difficulty and the potential of this task.

With the continuous development of AI and deep learning technologies, MindSpore, as an efficient, flexible, and powerful framework, shows broad application prospects. MindSpore features efficient computation acceleration that is deeply integrated with hardware, fully utilizing computing resources and significantly improving model training speed. In addition, automatic mixed precision of MindSpore selects appropriate numerical precision during training, reducing memory usage and improving computational efficiency. Looking forward, the MindSpore ecosystem is expected to continue expanding, covering more industry applications.