Achieving Llama 3.1-8B Adaptation and Open Source in 6 Hours! MindSpore Transformers Enables Innovation

On July 24 (Beijing time), Meta officially released the Llama 3.1 open source large language models in 8B, 70B, and 405B parameter versions. The Llama 3.1 405B model is comparable to closed source models such as GPT-4 and Claude 3.5 on multiple benchmarks. The 8B and 70B models are also competitive with other closed source and open source models of similar parameter counts.

01

Achieving Llama 3.1 adaptation in 6 hours

Using MindSpore Transformers, developers completed the migration and adaptation of Llama 3.1-8B fine-tuning and inference within 6 hours, and then uploaded the code to the open source platform Gitee for all developers to use (the code will be uploaded to other model communities later).

MindSpore Transformers Llama3.1 code repository address: https://gitee.com/mindspore/mindformers/blob/dev/research/llama3_1

MindSpore code repository address: https://gitee.com/mindspore/mindspore

In terms of development, MindSpore Transformers already supports Llama 3 out of the box, so Llama 3.1 fine-tuning, inference, and deployment can be built on top of that existing support.

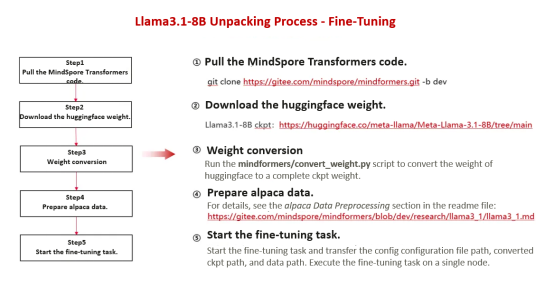

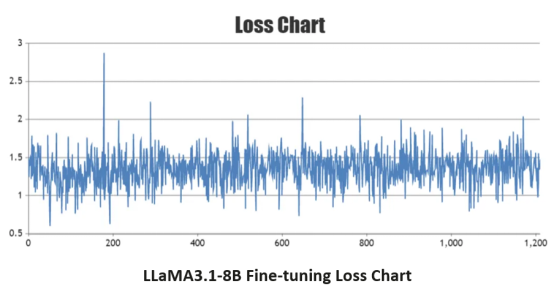

In terms of fine-tuning, developers use the kit's weight conversion tool to convert Hugging Face weights in one step. They then use APIs such as Trainer provided by MindSpore Transformers (MindFormers) and modify the configuration file to complete fine-tuning adaptation and training. The loss curve indicates that the fine-tuning task runs stably.

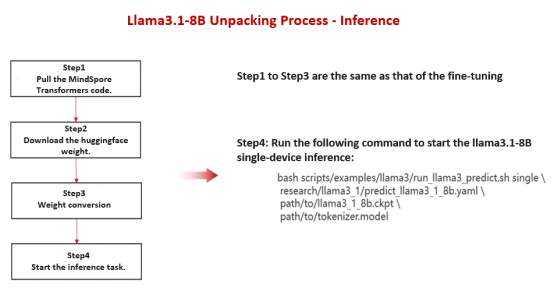

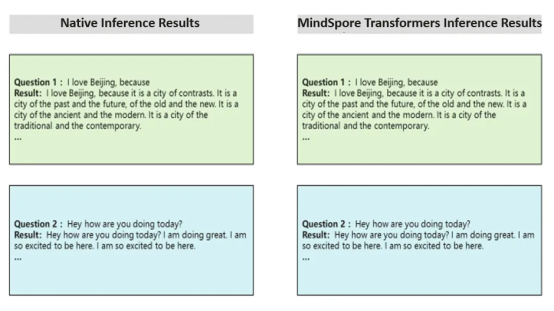

In terms of inference and deployment, the steps of pulling the code, downloading the weights, and converting the weights are the same as those for fine-tuning. The inference results show that the precision of the adapted Llama 3.1 model is aligned with that of the native model.

MindSpore Transformers Llama 3.1 Out-of-the-Box Workflow

Fine-Tuning:

After the fine-tuning task is launched, the loss curve shows that it runs stably.
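The article does not say how the loss curve was judged to be stable. As an illustration only, the following minimal sketch shows one possible heuristic, assuming the per-step losses have already been exported as a list of positive floats. The function names, the window size, and the spike threshold are hypothetical and are not part of MindSpore Transformers.

```python
import math
from statistics import mean


def moving_average(values, window):
    """Return the simple moving average of `values` over `window` steps."""
    if window <= 0:
        raise ValueError("window must be positive")
    return [
        mean(values[i - window + 1 : i + 1])
        for i in range(window - 1, len(values))
    ]


def is_training_stable(losses, window=5, spike_ratio=1.5):
    """Heuristic stability check (an assumption, not the authors' method).

    A run is treated as stable when:
      1. every loss value is finite (no NaN or inf),
      2. the smoothed loss never jumps by more than `spike_ratio` times
         its previous value, and
      3. the smoothed loss ends lower than it started.
    """
    if len(losses) < 2 * window:
        raise ValueError("need at least 2 * window loss values")
    if any(not math.isfinite(x) for x in losses):
        return False
    smoothed = moving_average(losses, window)
    no_spikes = all(
        cur <= prev * spike_ratio
        for prev, cur in zip(smoothed, smoothed[1:])
    )
    return no_spikes and smoothed[-1] < smoothed[0]
```

A steadily decreasing sequence such as `[2.0 - 0.05 * i for i in range(20)]` passes this check, while a sequence that contains `float("nan")` or a sudden jump in the smoothed loss does not. The thresholds would need tuning for a real run.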

Inference:

With MindSpore Transformers running on Ascend AI processors, the inference result of Llama 3.1-8B is the same as that of the native Llama 3.1 model, which completes precision alignment.
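The precision alignment described above can be thought of as comparing the outputs of the adapted model with those of the native model on the same prompt. The sketch below is a hedged illustration of such a comparison, assuming the generated token IDs and a slice of logits from both models have already been collected as plain Python lists. The helper names and the tolerance `atol` are hypothetical, and the article does not state which criteria were actually used.

```python
def tokens_match(reference_ids, candidate_ids):
    """True when both models generated exactly the same token IDs."""
    return list(reference_ids) == list(candidate_ids)


def max_abs_diff(reference, candidate):
    """Largest element-wise absolute difference between two float lists."""
    if len(reference) != len(candidate):
        raise ValueError("inputs must have the same length")
    return max(
        (abs(r - c) for r, c in zip(reference, candidate)),
        default=0.0,
    )


def is_aligned(reference_ids, candidate_ids,
               reference_logits, candidate_logits, atol=1e-3):
    """Assumed alignment criterion: identical tokens and close logits.

    `atol` is an illustrative tolerance, not a value from the article.
    """
    return (
        tokens_match(reference_ids, candidate_ids)
        and max_abs_diff(reference_logits, candidate_logits) <= atol
    )
```

In this sketch, `reference_*` would come from the native model and `candidate_*` from the adapted model. Requiring identical token IDs mirrors the article's statement that the inference results are the same, while the logits tolerance is an additional, optional check.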

02

About MindSpore and MindSpore Transformers

Huawei's MindSpore is the first open source all-scenario AI computing framework in the industry. It supports AI foundation models and scientific intelligence. The built-in parallel technology and component-based design of MindSpore make MindSpore Transformers a full-process development kit for foundation model training, fine-tuning, evaluation, inference, and deployment. MindSpore Transformers supports mainstream Transformer pre-trained models and SOTA downstream task applications in the industry. It helps users easily implement foundation model training and innovative R&D.