Illumination-Aware Image Quality Assessment for Enhanced Low-Light Image


1. Background

Images captured in a dark environment may suffer from low visibility or loss of significant details. Although existing low-illumination enhancement (LIE) algorithms can effectively improve the visual aesthetics of images, these algorithms may under- or over-expose the image and introduce visual artifacts. This indicates that it is important to perform image quality assessment (IQA) of enhanced low-light images. To reduce the overshoot effects of LIE, this paper proposes an illumination-aware image quality assessment, called LIE-IQA, for enhanced low-light images.

2. About the Team

The team is led by Associate Professor Liang Lingyu, a recipient of the Chinese Association for Artificial Intelligence – Huawei MindSpore Academic Award Fund. In recent years, the team has worked on multiple research topics, such as interpretable machine learning and image and text recognition in complex scenarios.

3. Introduction

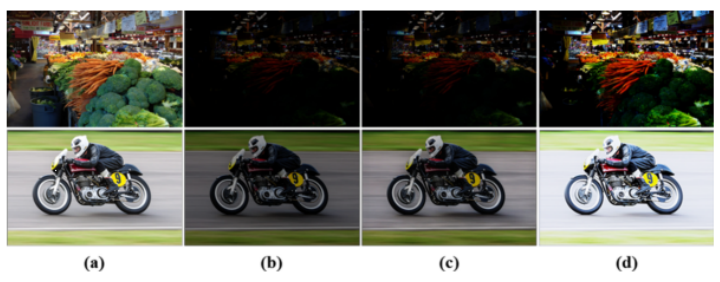

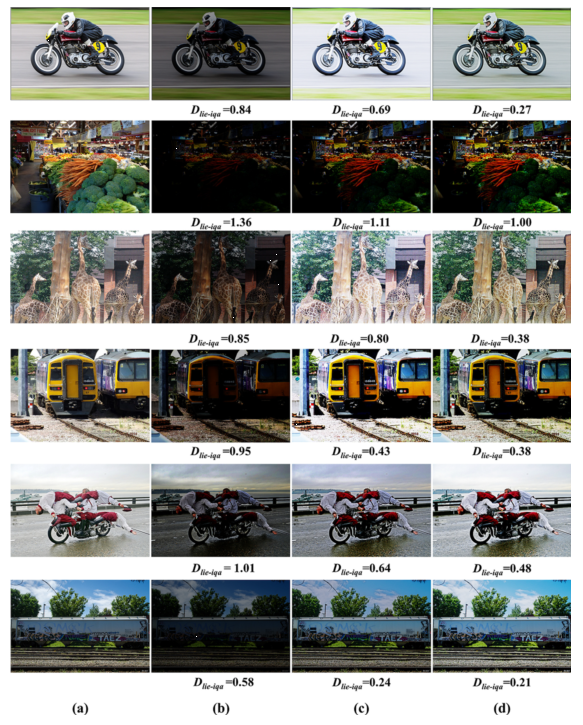

This paper analyzes under- and over-exposure and visual artifacts in enhanced low-light images (see Figure 1), and proposes an illumination-aware image quality assessment method, called LIE-IQA, to reduce the overshoot effects of low-light image enhancement. LIE-IQA uses intrinsic image decomposition to extract the reflectance and shading components of both the enhanced low-light image and the reference image, and then computes the LIE-IQA score as the weighted similarity between the VGG-based features of the two images. Because the weights of the LIE-IQA metric are learned from data, the proposed metric is adaptive. Qualitative and quantitative experiments show that LIE-IQA outperforms the compared methods in image quality measurement. LIE-IQA can also be used as a regularization term in a loss function to optimize an end-to-end LIE method. The results indicate the potential of the LIE-IQA optimization framework for reducing the overshoot effects of low-light enhancement.

Figure 1. Visual artifacts caused by undershoot/overshoot of two recent LIE methods. (a) Reference images (Ground-Truth). (b) Low-light images. (c) Results of DALE. (d) Results of Zero-DCE.

4. Related Links

Paper: https://link.springer.com/chapter/10.1007/978-3-030-88010-1_19

Code implementation based on MindSpore: https://gitee.com/mindspore/contrib/tree/master/papers/LIE-IQA

5. Technical Highlights of the Algorithm Framework

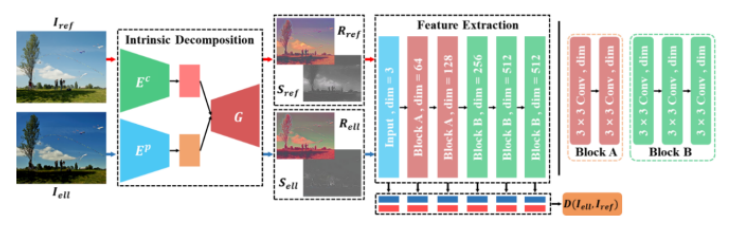

Figure 2. The framework of the illumination-aware image quality assessment method (LIE-IQA).

The LIE-IQA framework consists of two main modules, an intrinsic decomposition module and a feature extraction module, followed by a learnable perceptual distance measurement:

(1) The intrinsic decomposition module is an encoder-decoder structure with some constraint conditions. This structure is used to decompose the reference image (Ground-Truth) $I_{ref}$ and the enhanced low-light image $I_{ell}$ into reflectance and shading images.

(2) The feature extraction module is a VGG-based convolutional network consisting of two main blocks (Block A and B) with different numbers of convolutional filters and dimensions.

(3) The learnable perceptual distance measurement $D(I_{ell}, I_{ref})$ is the weighted similarity of the extracted features between $I_{ell}$ and $I_{ref}$, which can be used as a regularization term in the loss function of an end-to-end LIE network.
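The idea of a weighted feature similarity can be illustrated with a minimal NumPy sketch. It is not the paper's exact formula: the per-layer cosine similarity, the weight normalization, and the names `cosine_similarity` and `weighted_perceptual_distance` are assumptions made for illustration, and random arrays stand in for the VGG-based features.

```python
import numpy as np

def cosine_similarity(a, b, eps=1e-8):
    """Cosine similarity between two feature maps, flattened to vectors."""
    a = np.asarray(a, dtype=np.float64).ravel()
    b = np.asarray(b, dtype=np.float64).ravel()
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b) + eps))

def weighted_perceptual_distance(feats_ell, feats_ref, weights):
    """Distance = 1 - weighted mean of per-layer feature similarities.

    feats_ell, feats_ref: lists of feature maps (one array per layer).
    weights: one positive weight per layer (learned in the real method,
             fixed by hand in this sketch).
    """
    if not (len(feats_ell) == len(feats_ref) == len(weights)):
        raise ValueError("need one weight per pair of feature maps")
    w = np.asarray(weights, dtype=np.float64)
    w = w / w.sum()  # normalize so the weights sum to 1
    sims = np.array([cosine_similarity(fe, fr)
                     for fe, fr in zip(feats_ell, feats_ref)])
    return float(1.0 - np.dot(w, sims))

# Hypothetical stand-ins for features extracted from two layers.
rng = np.random.default_rng(0)
feats_ref = [rng.random((8, 16, 16)), rng.random((16, 8, 8))]
feats_ell = [f + 0.1 * rng.random(f.shape) for f in feats_ref]
weights = [0.7, 0.3]

d_same = weighted_perceptual_distance(feats_ref, feats_ref, weights)
d_diff = weighted_perceptual_distance(feats_ell, feats_ref, weights)
```

In this sketch identical features give a distance close to zero, and the distance grows as the enhanced image's features drift away from the reference features.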

The comparison results demonstrate that the LIE-IQA method has superior performance on the LIE-IQA dataset compared to other IQA methods. Even though the LIE-IQA method is designed specifically for the IQA of LIE, it is superior to other methods on standard IQA databases, including the CSIQ, MDID, and LIVE datasets. In addition, better perceptual quality can be obtained using an LIE algorithm optimized by LIE-IQA.

6. Experiments

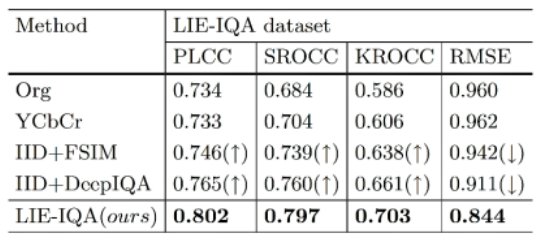

1) Ablation experiments. The effectiveness of the intrinsic decomposition module in LIE-IQA is verified via ablation experiments. First, the team removed the intrinsic decomposition module, replaced it with an image color space conversion (RGB to YCbCr), and compared the performance on low-light image enhancement quality assessment. Conversely, the team added the intrinsic decomposition module to natural image quality assessment models (for example, DeepIQA and FSIM) to explore its contribution. The results, shown in Table 1, indicate that the intrinsic decomposition module effectively improves the performance of low-light image enhancement quality assessment.
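For reference, the RGB to YCbCr conversion used as the ablation baseline can be sketched as follows. The source does not say which YCbCr variant was used; this sketch assumes the full-range BT.601 (JPEG-style) coefficients on float images in [0, 1], and the function name `rgb_to_ycbcr` is hypothetical.

```python
import numpy as np

# Full-range BT.601 coefficients (assumed variant, see lead-in).
_RGB_TO_YCBCR = np.array([
    [0.299, 0.587, 0.114],           # Y  (luma)
    [-0.168736, -0.331264, 0.5],     # Cb (blue-difference chroma)
    [0.5, -0.418688, -0.081312],     # Cr (red-difference chroma)
])
_OFFSET = np.array([0.0, 0.5, 0.5])  # centers chroma at 0.5 for [0, 1] input

def rgb_to_ycbcr(img):
    """Convert an (H, W, 3) float RGB image in [0, 1] to YCbCr."""
    img = np.asarray(img, dtype=np.float64)
    return img @ _RGB_TO_YCBCR.T + _OFFSET

white = rgb_to_ycbcr(np.ones((1, 1, 3)))
red = rgb_to_ycbcr(np.array([[[1.0, 0.0, 0.0]]]))
```

Like the reflectance and shading split, this separates a brightness-like channel (Y) from color information (Cb, Cr), which is why it serves as a natural replacement in the ablation.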

Table 1. Experiment to explore intrinsic decomposition module contribution. Larger PLCC, SROCC, KROCC, and smaller RMSE values indicate better performance.

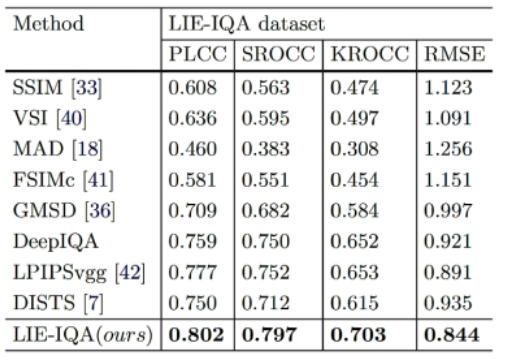
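The four criteria reported in the tables can be computed as in the following NumPy sketch. It uses the textbook definitions only: the nonlinear mapping that IQA studies often fit before computing PLCC and RMSE is omitted, KROCC is computed without tie correction, and all function names are hypothetical.

```python
import numpy as np

def plcc(x, y):
    """Pearson linear correlation coefficient."""
    return float(np.corrcoef(np.asarray(x, float), np.asarray(y, float))[0, 1])

def _ranks(v):
    """1-based ranks, with tied values sharing their average rank."""
    v = np.asarray(v, float)
    order = np.argsort(v, kind="mergesort")
    ranks = np.empty(len(v), float)
    ranks[order] = np.arange(1, len(v) + 1)
    for val in np.unique(v):
        mask = v == val
        ranks[mask] = ranks[mask].mean()
    return ranks

def srocc(x, y):
    """Spearman rank-order correlation: Pearson correlation of the ranks."""
    return plcc(_ranks(x), _ranks(y))

def krocc(x, y):
    """Kendall rank-order correlation (tau-a, no tie correction)."""
    x, y = np.asarray(x, float), np.asarray(y, float)
    n = len(x)
    s = 0.0
    for i in range(n - 1):
        # +1 for each concordant pair, -1 for each discordant pair
        s += np.sum(np.sign(x[i + 1:] - x[i]) * np.sign(y[i + 1:] - y[i]))
    return float(s / (n * (n - 1) / 2))

def rmse(x, y):
    """Root mean squared error between predictions and subjective scores."""
    x, y = np.asarray(x, float), np.asarray(y, float)
    return float(np.sqrt(np.mean((x - y) ** 2)))

# Hypothetical predicted scores and subjective scores.
pred = [1.0, 2.0, 3.0, 4.0, 5.0]
mos = [2.0, 4.0, 6.0, 8.0, 10.0]
scores = (plcc(pred, mos), srocc(pred, mos), krocc(pred, mos), rmse(pred, mos))
```

The three correlation coefficients measure how well predictions track subjective scores (linearly or by rank), while RMSE measures the absolute prediction error, which is why larger correlations and a smaller RMSE indicate better performance.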

2) Performance comparison on standard IQA datasets. The team compared LIE-IQA with a set of full-reference IQA methods, including SSIM, VSI, MAD, FSIMc, GMSD, DeepIQA, LPIPS, and DISTS, on different datasets (CSIQ, MDID, and LIVE). The quantitative results, shown in Table 2, demonstrate the superiority of LIE-IQA over the other methods and its potential for more general IQA tasks.

Table 2. Performance comparison on standard IQA datasets.

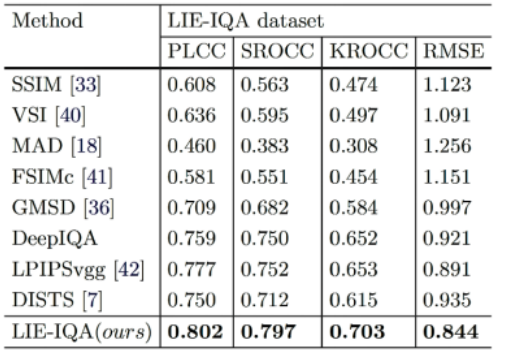

3) Performance comparison on low-light image enhancement quality assessment test datasets. The team verified the proposed method for low-light image enhancement quality prediction on the LIE-IQA dataset. The comparison results, shown in Table 3, demonstrate that LIE-IQA performs best on low-light image enhancement quality assessment compared to other full-reference (FR) IQA methods designed for natural images.

Table 3. Performance comparison on low-light image enhancement quality assessment test dataset.

4) LIE-IQA for low-light enhancement. Since the ultimate goal of LIE-IQA is to improve low-light enhancement, the team also used the metric as a regularization term in a loss function to optimize an existing end-to-end LIE method. The results show that better perceptual quality can be obtained after optimization, as shown in Figure 3.

Figure 3. Images generated by optimized low-light enhancement algorithm with LIE-IQA scores.
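Using LIE-IQA as a regularizer can be written, as a rough sketch, in the form below. Here $D(I_{ell}, I_{ref})$ is the LIE-IQA distance between the enhanced image and the reference. The remaining symbols are assumptions introduced for illustration and do not come from the source: $\mathcal{L}_{base}$ is whatever loss the existing end-to-end LIE method already uses, $f_\theta$ is the enhancement network with parameters $\theta$, $I_{low}$ is the low-light input, and $\lambda$ is a trade-off weight.

$$
\mathcal{L}_{total}(\theta) = \mathcal{L}_{base}(I_{ell}, I_{ref}) + \lambda \, D(I_{ell}, I_{ref}), \qquad I_{ell} = f_\theta(I_{low})
$$

With $\lambda = 0$ this reduces to the original training objective; a larger $\lambda$ places more emphasis on the perceptual quality measured by LIE-IQA.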

7. MindSpore Code Implementation

The MindSpore implementation environment is as follows:

(1) Python 3.7.5

(2) MindSpore 1.2.1

(3) CUDA 10.1

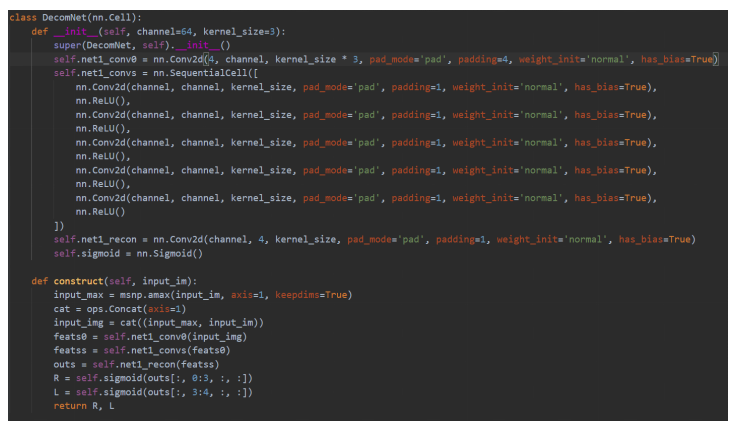

Note that the intrinsic image decomposition module is implemented based on Retinex theory. The performance of the Retinex-based LIE-IQA is almost the same as that reported in the paper, but the Retinex-based image decomposition module is easier to code. It uses only simple convolution modules, has fewer model parameters, is easier to train, and consumes fewer training resources, as shown in Figure 4.

Figure 4. Code of the Retinex-based image decomposition module.
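The Retinex model underlying this module treats an image as the element-wise product of a reflectance component and a shading (illumination) component. The NumPy sketch below illustrates that model only; it is a hand-crafted decomposition, not the learned convolutional module from the repository. Using the per-pixel channel maximum as the shading estimate is an assumption made for illustration, and `retinex_decompose` is a hypothetical name.

```python
import numpy as np

def retinex_decompose(img, eps=1e-4):
    """Split an (H, W, 3) float image in [0, 1] so that img = R * S.

    S (shading) is estimated as the per-pixel maximum over color channels,
    floored at eps to avoid division by zero; R (reflectance) is img / S.
    """
    img = np.asarray(img, dtype=np.float64)
    shading = np.maximum(img.max(axis=-1, keepdims=True), eps)  # (H, W, 1)
    reflectance = img / shading                                 # (H, W, 3)
    return reflectance, shading

# Hypothetical dark image: random values scaled down to mimic low light.
rng = np.random.default_rng(0)
low_light = 0.2 * rng.random((4, 4, 3))
R, S = retinex_decompose(low_light)
```

Multiplying `R` by `S` recovers the input, and `R` stays within [0, 1] regardless of how dark the input is, which is the property that makes reflectance useful for comparing images under different illumination.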

8. Conclusion

The low-light enhancement quality assessment method proposed in this paper outperforms the compared methods in predicting the quality of enhanced low-light images. It can also be used to optimize low-light enhancement algorithms and improve the quality of their output. In the future, the team will expand the IQA-based optimization framework and use it for more image enhancement tasks.