Gold-YOLO with Innovative Gather-and-Distribute Mechanism Has Been Open Sourced in the MindSpore Community

Author: Wang Yunhe Source: Zhihu

YOLO models, which have existed for eight years, now serve as the benchmark in object detection thanks to their outstanding performance. After more than ten rounds of updates and iterations, these models have become stable and comprehensive. Researchers now focus more on fine-tuning the structure within a single computing module or on improving the head and training methods. Even so, the existing models are still far from flawless.

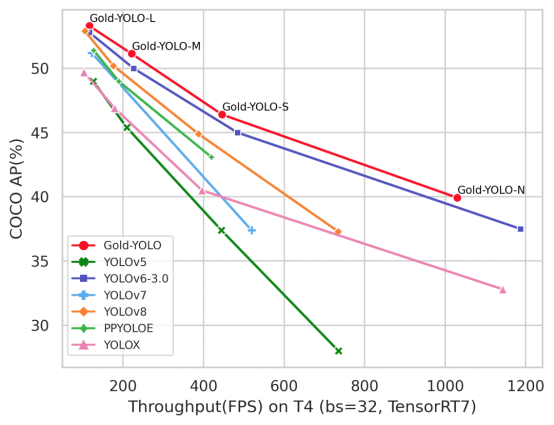

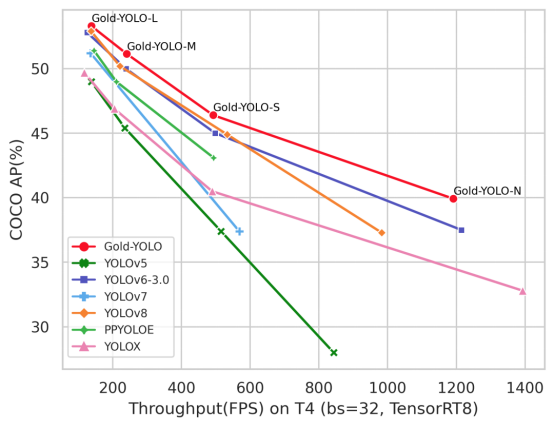

At present, YOLO models usually adopt a method similar to the feature pyramid network (FPN) for information fusion, but this structure loses information when fusing features across non-adjacent layers. To solve this problem, Huawei Noah's Ark Laboratory (hereinafter referred to as Huawei) proposes a novel gather-and-distribute (GD) mechanism. This mechanism gathers and fuses features from different levels from a global perspective, then distributes and injects the fused features back into the different levels, building a more efficient mechanism for information interaction and fusion. Gold-YOLO is built on this mechanism. On the COCO dataset, Gold-YOLO outperforms previous YOLO models, achieving state-of-the-art (SOTA) results on the accuracy-speed curve.

Accuracy-speed curve (TensorRT7)

Accuracy-speed curve (TensorRT8)

For details about the paper, visit https://arxiv.org/abs/2309.1133. For the MindSpore code, visit https://gitee.com/mindspore/models/tree/master/research/cv/Gold_YOLO.

Problems of Conventional YOLO Models

In detection models, a sequence of features is typically extracted from the backbone network at various levels. The FPN leverages these features to create a fusion structure. Features of different levels encode location information for objects of varying sizes. Although their content differs, the features complement each other once fused, enriching the information at every level and ultimately improving network performance.

In the original FPN structure, adjacent layers integrate information effectively through layer-by-layer fusion. However, cross-layer information fusion poses challenges. Because non-adjacent layers have no direct interaction path, information exchanged between them must pass through the intermediate layers, which leads to information loss. Developers have put considerable effort into solving this problem, mainly by adding shortcut paths to enhance information flow.

However, even with these improvements, FPN-based information fusion still struggles with cross-layer interaction and still loses information, because the network contains a large number of paths and the interaction between non-adjacent layers remains indirect.
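The layer-by-layer behavior described above can be illustrated with a toy sketch. This is not the FPN or Gold-YOLO implementation: the function names, the 1-D single-channel features, and the use of plain addition for fusion are all assumptions made for illustration. It only shows that the coarsest level reaches the finest level through the level in between.

```python
# Toy 1-D illustration of FPN-style top-down fusion.
# All names and values are hypothetical; real FPNs use convolutions on 2-D maps.

def upsample2x(feature):
    """Nearest-neighbour upsampling: repeat every value twice."""
    return [v for v in feature for _ in range(2)]

def fpn_top_down(levels):
    """Fuse levels ordered from highest resolution to lowest.

    Each level is only added to its immediate neighbour, so the coarsest
    level influences the finest one solely through the levels in between.
    """
    fused = [None] * len(levels)
    fused[-1] = list(levels[-1])           # coarsest level is passed through
    for i in range(len(levels) - 2, -1, -1):
        top = upsample2x(fused[i + 1])     # bring the coarser map to this resolution
        fused[i] = [a + b for a, b in zip(levels[i], top)]
    return fused

# Hypothetical features at three resolutions.
fine = [1.0] * 8
middle = [10.0] * 4
coarse = [100.0] * 2

out = fpn_top_down([fine, middle, coarse])
print(out[0][0], out[1][0], out[2][0])
```

In this sketch the coarse level contributes to the fine level only after it has already been merged into the middle level, which is the indirect path the text identifies as a source of information loss.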

Gold-YOLO: New Information Exchange and Fusion Mechanism

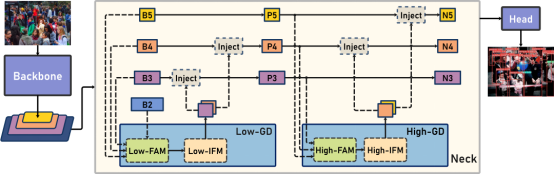

Gold-YOLO architecture

To address these challenges, Huawei proposes the GD mechanism for information exchange and fusion. The mechanism fuses multi-level features globally and injects the resulting global information into higher levels. This greatly enhances the information fusion capability of the neck without significantly increasing latency, thereby improving the model's ability to detect objects of varying sizes.

The GD mechanism is implemented through three modules: feature alignment module (FAM), information fusion module (IFM), and information injection module (Inject).

· The FAM collects and aligns features from various levels.

· The IFM fuses the aligned features to generate global information by using convolutions or Transformer operators.

· The Inject module injects the global information into different levels.
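The three modules can be sketched as a gather, fuse, and distribute pipeline. The following is a minimal sketch under stated assumptions, not the Gold-YOLO code: the function names `fam`, `ifm`, and `inject` are hypothetical, nearest-neighbour resizing stands in for the alignment step, an element-wise mean stands in for the convolution or Transformer fusion, and plain addition stands in for the injection step.

```python
# Toy sketch of gather-and-distribute on 2-D single-channel maps (pure Python).
# The alignment, fusion, and injection operators are simplified stand-ins.

def resize_nearest(feature, out_h, out_w):
    """Resize a 2-D map (list of rows) with nearest-neighbour sampling."""
    in_h, in_w = len(feature), len(feature[0])
    return [
        [feature[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]

def fam(levels, out_h, out_w):
    """Feature alignment: bring every level to one common resolution."""
    return [resize_nearest(f, out_h, out_w) for f in levels]

def ifm(aligned):
    """Information fusion: element-wise mean (stand-in for conv/Transformer)."""
    n = len(aligned)
    h, w = len(aligned[0]), len(aligned[0][0])
    return [[sum(f[r][c] for f in aligned) / n for c in range(w)] for r in range(h)]

def inject(local, global_info):
    """Information injection: resize global info to the local size and add it."""
    g = resize_nearest(global_info, len(local), len(local[0]))
    return [[a + b for a, b in zip(lrow, grow)] for lrow, grow in zip(local, g)]

def constant_map(value, size):
    """Hypothetical helper: a size x size map filled with one value."""
    return [[value] * size for _ in range(size)]

# Three hypothetical levels at 8x8, 4x4, and 2x2 resolution.
levels = [constant_map(1.0, 8), constant_map(2.0, 4), constant_map(3.0, 2)]

aligned = fam(levels, 4, 4)                          # gather at a common size
global_info = ifm(aligned)                           # fuse into global information
outputs = [inject(f, global_info) for f in levels]   # distribute to every level
```

Unlike layer-by-layer fusion, every level here receives the same global information in a single step, so no level depends on an intermediate level to see the others.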

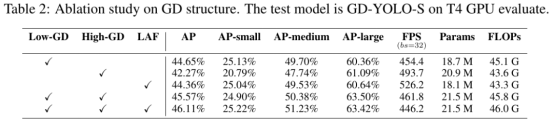

In Gold-YOLO, two GD branches are constructed to meet the model's need to detect objects of different sizes while balancing accuracy and speed: the low-stage gather-and-distribute branch (Low-GD) and the high-stage gather-and-distribute branch (High-GD). Low-GD extracts and fuses features with convolutions, while High-GD uses Transformer operators.

In addition, to promote the flow of local information, Huawei builds a lightweight adjacent layer fusion (LAF) module that combines the features of adjacent layers at the local scale, further improving model performance. Huawei also introduces a pre-training method and verifies its effectiveness for YOLO models: the backbone is pre-trained on ImageNet 1K using the MAE method, which significantly improves the convergence speed and accuracy of the model.

Experimental Results

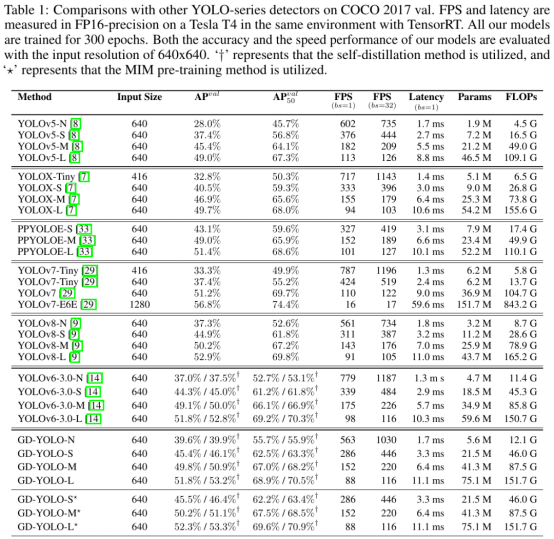

The model's accuracy is tested on the COCO dataset, and its speed is measured on a Tesla T4 GPU with TensorRT 7. The results show that Gold-YOLO achieves SOTA performance on the accuracy-speed curve.

An ablation study is also performed to examine how the different branches and structures of Gold-YOLO affect model accuracy and speed.

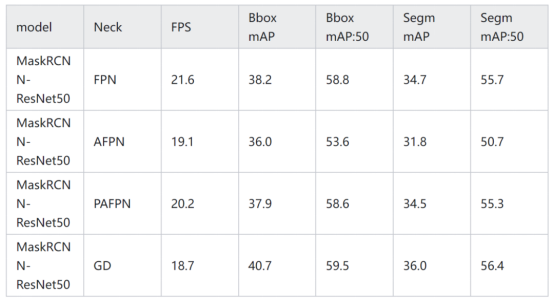

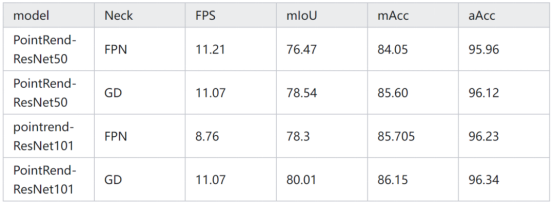

To verify the robustness of the GD mechanism in different models and tasks, the following experiments are also conducted.

· Instance Segmentation Task

The neck in Mask R-CNN is replaced with GD, and training and testing are performed on the COCO instance segmentation dataset.

· Semantic Segmentation Task

The neck in PointRend is replaced with GD, and training and testing are performed on the Cityscapes dataset.

· Object Detection Model

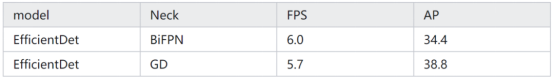

The neck in EfficientDet is replaced with GD, and training and testing are performed on the COCO dataset.