MindSpore's Annual Recap in 2023

2023 was an incredible year for MindSpore.

Committed to enabling a vast range of models and applications, MindSpore had been downloaded more than 6.57 million times as of December 2023, and the MindSpore LLM Platform more than 570,000 times. Thanks to the contributions of over 25,000 developers, MindSpore now serves more than 5,500 enterprises, which together deliver over 40% of China's industry-specific models.

To further grow the MindSpore community, we set up the MindSpore Community Committee, and 18 organizations signed up to contribute to the community's development.

Technical progress

Scientific papers

As of 2023, more than 100 outstanding research teams had partnered with MindSpore and published over 1,000 AI papers. MindSpore is gaining growing recognition across the industry.

Version updates

MindSpore released two major versions in 2023, delivering 328 new features. More than 5,000 developers from 155 countries and regions contributed to these releases. We are grateful for your support!

For details, please refer to Version Dynamics.

Easy-to-use kits

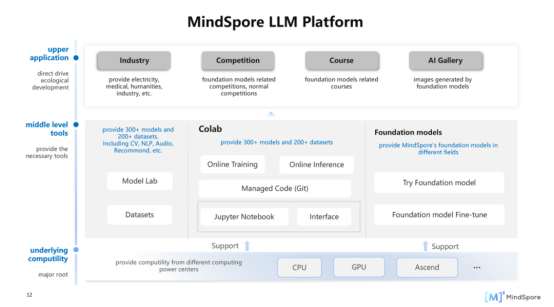

MindSpore has rolled out a plethora of kits for developing LLMs, implementing scientific computing, and building capabilities in base domains. By integrating relevant APIs or applying provided models, you can fully unleash AI-driven innovation.

Curious to learn more? Explore our kit repositories on the official website.

AI for science innovation

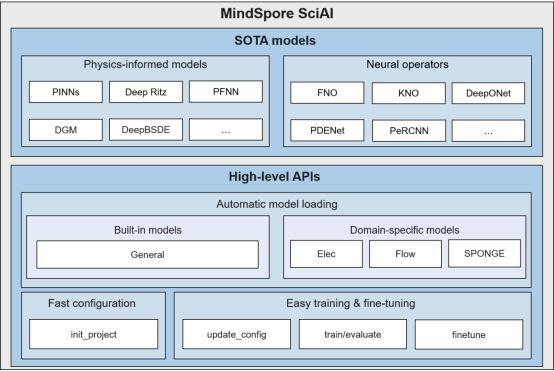

MindSpore SciAI is an AI for science model library that supports a wide range of models. Version 0.1 integrates more than 60 state-of-the-art (SOTA) models, covering both physics-informed models such as PINNs and PFNN and neural operators such as FNO and KNO. The library also provides out-of-the-box (OOTB) APIs for automatic model loading, fast environment configuration, and easy training and fine-tuning.

MindSpore SciAI is applicable to many fields, including fluidics, electromagnetism, acoustics, thermal physics, solid-state physics, and biology.

Want to know more? Download MindSpore SciAI from Gitee and try it out.

Case study

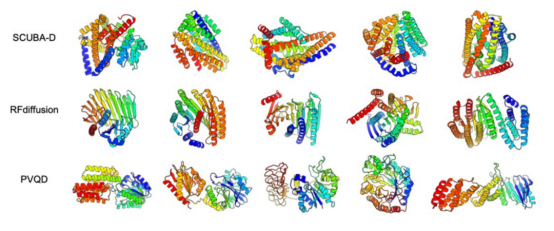

MindSpore-based PVQD model

In November 2023, Professor Liu Haiyan from the University of Science and Technology of China, together with Oristruct Biotech, published their research on PVQD, a protein vector quantization and diffusion model built on MindSpore.

PVQD combines an autoencoder with vector quantization and a generative diffusion model in the latent space to model complex protein structures within an end-to-end framework. By integrating AI with biocomputing, this breakthrough in protein structure prediction supports drug target discovery and advances the biopharmaceutical industry.

This project is a model of industry-academia-research cooperation, and MindSpore will continue to accelerate innovation in basic disciplines in the year ahead.

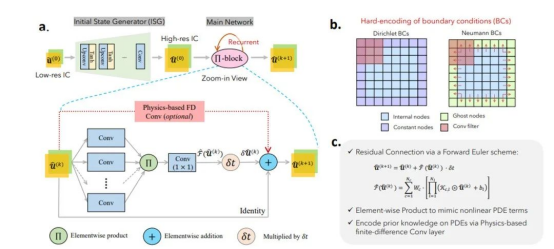

MindSpore-based PeRCNN

A team led by Professor Sun Hao, mainly from the Gaoling School of Artificial Intelligence at Renmin University of China, proposed PeRCNN, another successful use case of MindSpore. Their work has been published in Nature Machine Intelligence, and the code has been open-sourced in the MindSpore Flow repository on Gitee.

PeRCNN, short for Physics-encoded Recurrent Convolutional Neural Network, outperforms physics-informed neural networks (PINNs), ConvLSTM, and PDE-Net in generalization and noise resistance, and improves long-term inference accuracy by more than tenfold. PeRCNN shows great application potential in fields such as aerospace, shipbuilding, and weather forecasting.

For details, please refer to PeRCNN: A New AI + Scientific Computing Achievement Published in Nature.

CodeGeeX

CodeGeeX is a powerful programming assistant based on LLMs. It boasts functions such as code generation/completion, comment generation, code translation, and AI chat, helping developers significantly improve their work efficiency.

When the CodeGeeX project started in April 2022, it was built on the MindSpore 1.7 framework. During development, the CodeGeeX team worked with the MindSpore team to optimize the framework and accelerate training. For example, they applied several techniques to speed up computation, including element-wise operator fusion, layer normalization operator fusion, fast GELU activation, and matrix multiplication optimization.

This cooperation demonstrates the competitiveness of the MindSpore framework and the professional support of the MindSpore team. And heads up: MindSpore has now been upgraded to version 2.2, so go ahead and give it a try!

Community highlights

Cooperation

To date, the MindSpore community has collaborated with many open source projects (ONNX, Jina AI, and more), foundations (The Linux Foundation, OpenInfra Foundation, CNCF, and more), and organizations (CSDN, OSCHINA, BAAI, and more). In 2023, we also set up a featured area on Hugging Face to showcase our model code and empower more developers in that community. Let's discover the power and beauty of open source together.

Events & Awards

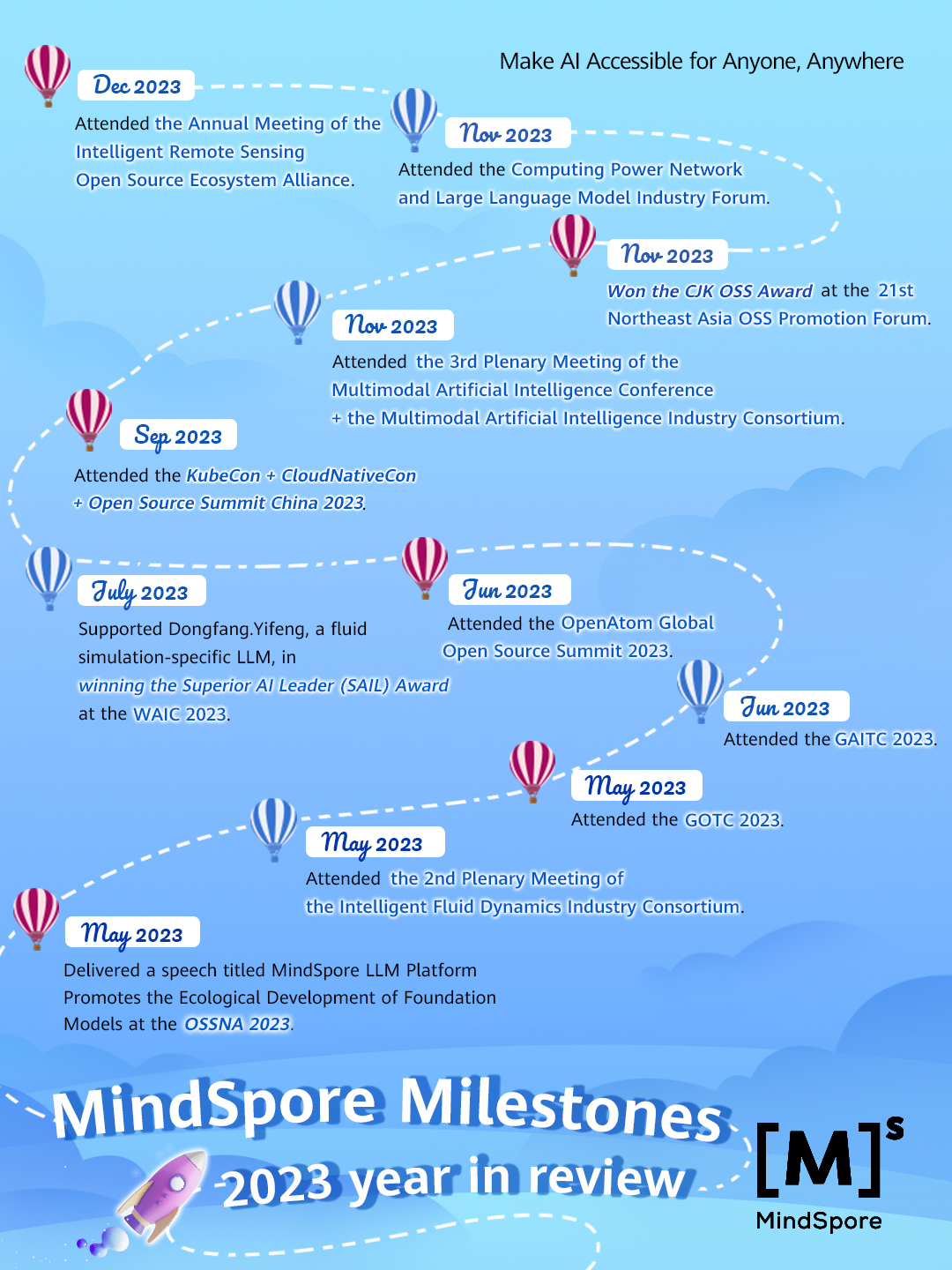

Over the past year, we've been busy traveling the world and showcasing our amazing work.

The MindSpore community took part in a variety of events, from forums and summits to meetings and conferences, where we met experts and enthusiasts from diverse fields and backgrounds and exchanged ideas on AI, open source software, intelligent fluid dynamics, and more. At Open Source Summit North America 2023, hosted by the Linux Foundation in Vancouver, Canada, our algorithm engineer Zhong Yuanke gave a talk on the foundation model ecosystem built around the MindSpore LLM Platform, further expanding MindSpore's influence in the open source industry.

Not only that, we were proud to help our partner's fluid simulation LLM, Dongfang.Yifeng, win the Superior AI Leader (SAIL) Award at the World Artificial Intelligence Conference 2023 in July. We also received the prestigious CJK OSS Award at the 21st Northeast Asia OSS Promotion Forum in November.

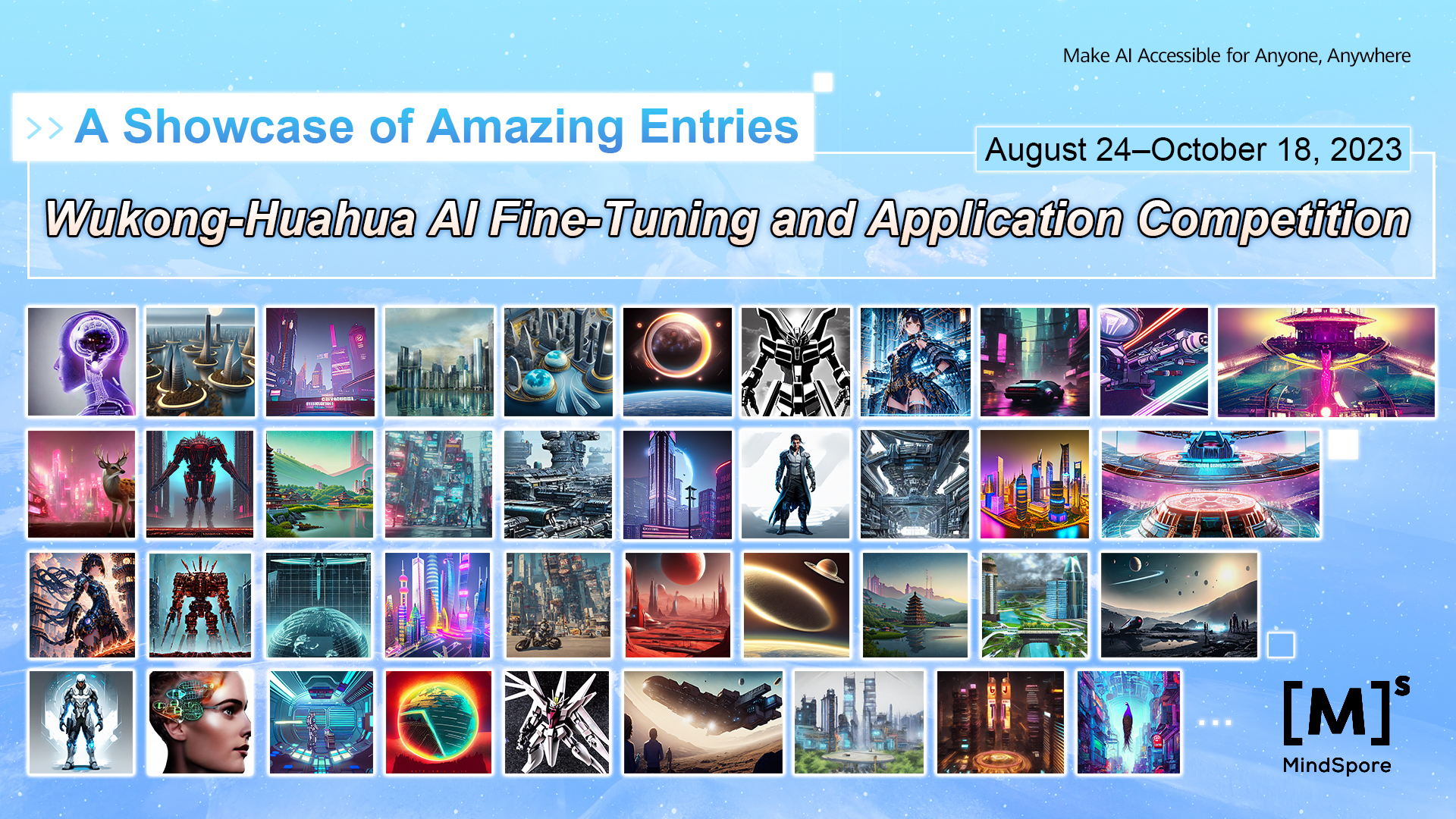

To unleash AI imagination, the MindSpore community also hosted the Wukong-Huahua AI Fine-Tuning and Application Competition from August 24 to October 18, 2023. Wukong-Huahua is a diffusion-based large model powered by MindSpore, with excellent AI image generation capabilities. Participating teams could either build innovative apps on the model API or create remarkable AI drawings after fine-tuning the model. The competition received 263 entries, 31 of which, including LoRA/DreamBooth fine-tuned models and applications, advanced to the defense stage. Let's celebrate the impressive work of our participants, who showed incredible imagination throughout the competition.

We are pleased and honored by these accomplishments and will continue to help our partners build awesome things, improve technology, and unleash creativity in the open source community and beyond.

SIGs

We have established more than 30 special interest groups (SIGs) to nurture open communication among experts, developers, professors, and students in relevant fields. These SIGs allow members to strengthen their skills and influence through technical discussions, projects, and meetups.

We can't wait for you to join us!

Future outlook

We are working hard to optimize MindSpore for AI innovation and application in 2024. By focusing on foundation models, AI for science, generative AI, and security and trustworthiness, we hope to provide services that our partners and users will love.

Your support and loyalty are invaluable to us as we continue to improve our AI technology for the future. MindSpore is all about enabling a vast range of models and applications for everyone.

About MindSpore

MindSpore is an open source AI framework that supports multi-processor architectures for diverse scenarios. It brings together data scientists, algorithm engineers, and developers, offering easy development, efficient execution, and flexible deployment to fully unleash hardware potential.

For more information, feel free to visit:

Official website: https://www.mindspore.cn/en

Gitee: https://gitee.com/mindspore/mindspore/blob/master/README.md