MindSpore SciAI 0.1 Released to Accelerate the Development of AI4Science

On the afternoon of September 21, 2023, at the MindSpore forum of HUAWEI CONNECT 2023, themed "Accelerate Intelligence", the MindSpore open-source community officially released MindSpore SciAI, a MindSpore-based suite of the most frequently used AI4Science models.

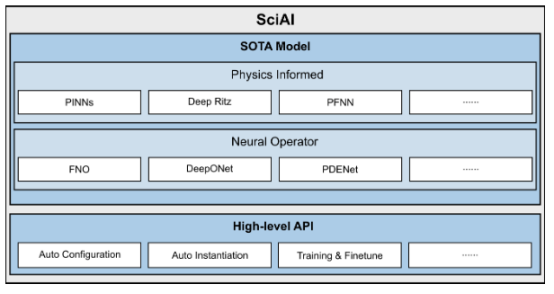

MindSpore has continued to develop toolkits for the AI4Science field, focusing on trending topics and industry pain points, with the aim of accelerating AI4Science development through strong performance and innovation capabilities. As an efficient and easy-to-use AI4Science suite, MindSpore SciAI 0.1 has more than 60 frequently used SOTA models built in, covering physics-informed models (such as PINNs, Deep Ritz, and PFNN) and neural operators (such as FNO, DeepONet, and PDENet), and ranks No. 1 in the world in terms of model coverage. It also provides high-level APIs that work out of the box for developers and users, such as one-click environment configuration, automatic model loading, and simplified training and fine-tuning.

Figure 1. Architecture of MindSpore SciAI

1. SOTA Models

1.1. Physics-Informed Models

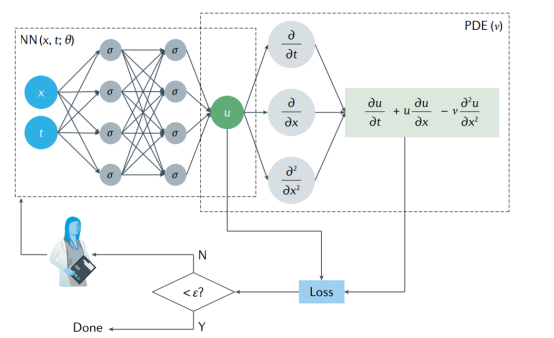

A physics-informed model integrates prior physical knowledge (such as governing equations and initial/boundary conditions) into a neural network. Typical models include PINNs (shown in figure 2), Deep Ritz, and PFNN. One advantage of these models is that no discrete mesh needs to be generated: derivatives are computed with the automatic differentiation capability of the AI framework, which avoids the discretization errors of numerical differentiation. This type of model is naturally suited to inverse problems and data assimilation problems. Compared with purely data-driven models, it extrapolates better and requires fewer training samples. However, it also has drawbacks. There is little guidance on network structure design, which makes singular problems hard to learn. The loss function contains multiple constraints, which makes training difficult to converge. The model must be retrained whenever the physical constraints change, so it generalizes poorly. Finally, calculation accuracy and convergence lack theoretical guarantees.
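
To make the automatic differentiation point concrete, here is a minimal pure-Python sketch. It is not MindSpore SciAI code: the `Dual` class, the one-neuron surrogate `u(x) = w2 * tanh(w1 * x + b1)`, and all parameter values are hypothetical and chosen only for illustration. It compares a derivative obtained by forward-mode automatic differentiation with a central finite difference, whose error depends on the step size `h`.

```python
import math


class Dual:
    """Number value + deriv * eps with eps**2 == 0 (forward-mode autodiff)."""

    def __init__(self, value, deriv=0.0):
        self.value = value
        self.deriv = deriv

    def __add__(self, other):
        other = _lift(other)
        return Dual(self.value + other.value, self.deriv + other.deriv)

    __radd__ = __add__

    def __mul__(self, other):
        other = _lift(other)
        # Product rule: (a * b)' = a * b' + a' * b
        return Dual(self.value * other.value,
                    self.value * other.deriv + self.deriv * other.value)

    __rmul__ = __mul__


def _lift(x):
    """Wrap plain numbers as constants (zero derivative)."""
    return x if isinstance(x, Dual) else Dual(float(x))


def tanh(x):
    """tanh with its derivative 1 - tanh(x)**2 propagated by the chain rule."""
    t = math.tanh(x.value)
    return Dual(t, (1.0 - t * t) * x.deriv)


def surrogate(x, w1=1.5, b1=-0.3, w2=0.8):
    """Hypothetical one-neuron 'network' u(x) = w2 * tanh(w1 * x + b1)."""
    return w2 * tanh(w1 * x + b1)


def autodiff_derivative(f, x0):
    """Seed dx/dx = 1 and read du/dx from the dual part."""
    return f(Dual(x0, 1.0)).deriv


def finite_difference(f, x0, h):
    """Central difference; its truncation error scales with h**2."""
    return (f(Dual(x0 + h)).value - f(Dual(x0 - h)).value) / (2.0 * h)


x0 = 0.7
exact = 0.8 * 1.5 * (1.0 - math.tanh(1.5 * x0 - 0.3) ** 2)
ad_error = abs(autodiff_derivative(surrogate, x0) - exact)
fd_error = abs(finite_difference(surrogate, x0, h=0.1) - exact)
print("autodiff error:", ad_error)
print("finite-difference error:", fd_error)
```

In a real physics-informed model, the framework's automatic differentiation plays the role of `Dual`, and the resulting derivatives enter the equation residual that the loss function penalizes.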

To address these problems, academia and MindSpore have proposed a series of improvements, such as adaptive activation functions, time and space decomposition, multi-scale optimization, and adaptive weighting. MindSpore SciAI has built-in SOTA physics-informed models that apply to domains such as fluid dynamics, electromagnetics, acoustics, heat transfer, and solid mechanics.
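
As a rough illustration of why multiple constraints complicate training, a typical physics-informed loss can be written as a weighted sum of an equation residual term and a boundary term. The notation below is an assumption introduced here, not taken from MindSpore SciAI: $u_\theta$ is the network, $\mathcal{N}$ the differential operator of the equation, $\mathcal{B}$ the boundary operator with target $g$, and $\lambda_r, \lambda_b$ the term weights.

```latex
\mathcal{L}(\theta)
  = \lambda_r \, \frac{1}{N_r} \sum_{i=1}^{N_r}
      \left| \mathcal{N}[u_\theta](x_i^{r}) \right|^2
  + \lambda_b \, \frac{1}{N_b} \sum_{j=1}^{N_b}
      \left| \mathcal{B}[u_\theta](x_j^{b}) - g(x_j^{b}) \right|^2
```

In this form, adaptive weighting schemes can be read as strategies that adjust $\lambda_r$ and $\lambda_b$ during training rather than fixing them in advance, so that no single term dominates the gradient.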

Figure 2. Overview of a physics-informed model: PINNs

1.2. Neural Operator Models

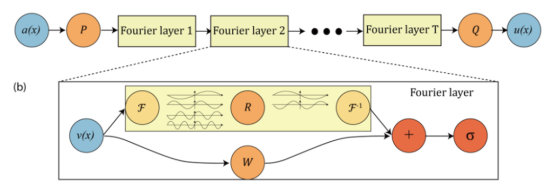

Physics-informed models such as PINNs mainly solve one specific equation at a time, whereas neural operator models learn mappings between infinite-dimensional function spaces and can therefore solve an entire family of PDEs at once. Typical examples include FNO (shown in figure 3), DeepONet, and PDENet. FNO uses the properties of the Fourier transform to learn the mapping between functions in Fourier space, and then transforms the result back to physical space. DeepONet learns the mapping between functions through a branch net and a trunk net. Neural operator models perform well in the fluid and meteorology domains, so MindSpore SciAI also has built-in SOTA neural operator models.
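
The Fourier-space step described for FNO can be sketched in a few lines of pure Python. This is a simplified, assumption-laden illustration, not the MindSpore SciAI implementation: it handles a single real 1D signal, uses a naive O(n^2) transform, and takes the per-mode `weights` as given rather than learning them. A full FNO layer also adds a pointwise linear path and a nonlinear activation. The function names `dft`, `idft`, and `spectral_layer` are hypothetical.

```python
import cmath
import math


def dft(values):
    """Naive discrete Fourier transform (O(n^2), for illustration only)."""
    n = len(values)
    return [
        sum(values[j] * cmath.exp(-2j * math.pi * k * j / n) for j in range(n))
        for k in range(n)
    ]


def idft(spectrum):
    """Naive inverse discrete Fourier transform."""
    n = len(spectrum)
    return [
        sum(spectrum[k] * cmath.exp(2j * math.pi * k * j / n) for k in range(n)) / n
        for j in range(n)
    ]


def spectral_layer(signal, weights):
    """Scale the lowest len(weights) Fourier modes and drop all higher modes.

    weights[0] should be real so that the output stays real-valued.
    """
    n = len(signal)
    modes = len(weights)
    if modes > n // 2:
        raise ValueError("keep at most n // 2 modes")
    spectrum = dft(signal)
    filtered = [0j] * n
    for k in range(modes):
        scaled = weights[k] * spectrum[k]
        filtered[k] = scaled
        if k > 0:
            # Mirror onto the negative frequency to preserve a real signal.
            filtered[n - k] = scaled.conjugate()
    # Transform back to physical space.
    return [value.real for value in idft(filtered)]


n = 8
low = [1.0 + math.cos(2 * math.pi * j / n) for j in range(n)]
high = [math.cos(2 * math.pi * 3 * j / n) for j in range(n)]
mixed = [a + b for a, b in zip(low, high)]

# With all-ones weights on 3 modes the layer acts as a low-pass filter,
# so the mode-3 component of `mixed` is removed.
smoothed = spectral_layer(mixed, [1.0, 1.0, 1.0])
print([round(value, 6) for value in smoothed])
```

Because the weights act on Fourier modes rather than on grid points, the same weights can in principle be applied to signals sampled at a different resolution, which is one reason this design suits operator learning.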

Figure 3. Overview of a neural operator model: FNO

2. Summary and Outlook

With the release of MindSpore SciAI 0.1, we hope to collaborate with more enterprises and research institutes to build more innovative AI4Science suites based on Ascend technologies and to help the AI industry and ecosystem prosper.

Code repository address:

https://gitee.com/mindspore/mindscience/tree/master/SciAI

Appendixes

Appendix 1: Modules and Functions

This section describes advanced and basic APIs of MindSpore SciAI. The MindSpore SciAI directory structure is as follows:

    ├── SciAI
    │   ├── cmake               # Compilation script
    │   ├── docs                # Documents
    │   │   ├── FAQ             # FAQs
    │   ├── sciai               # Main directory of MindSpore SciAI
    │   │   ├── architecture    # Basic neural network module
    │   │   ├── common          # Common modules
    │   │   ├── context         # Context settings
    │   │   ├── model           # AI4Sci most-frequently-used models
    │   │   ├── operators       # High-order differentiation
    │   │   └── utils           # Other auxiliary functions
    │   └── tutorial            # Teaching model

1.1. Advanced APIs

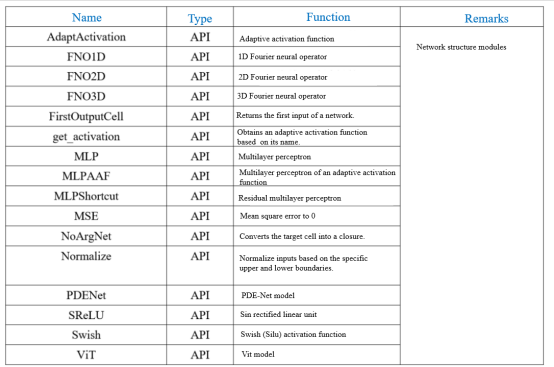

1.2. Basic APIs

1.2.1 Basic Neural Network Modules

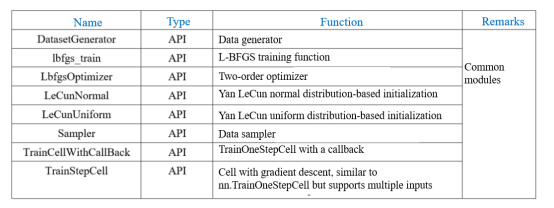

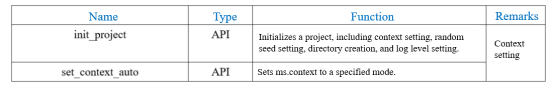

1.2.2 Common Modules

1.2.3 Context Settings

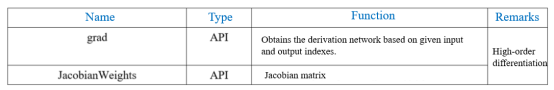

1.2.4 High-order Differentiation Functions

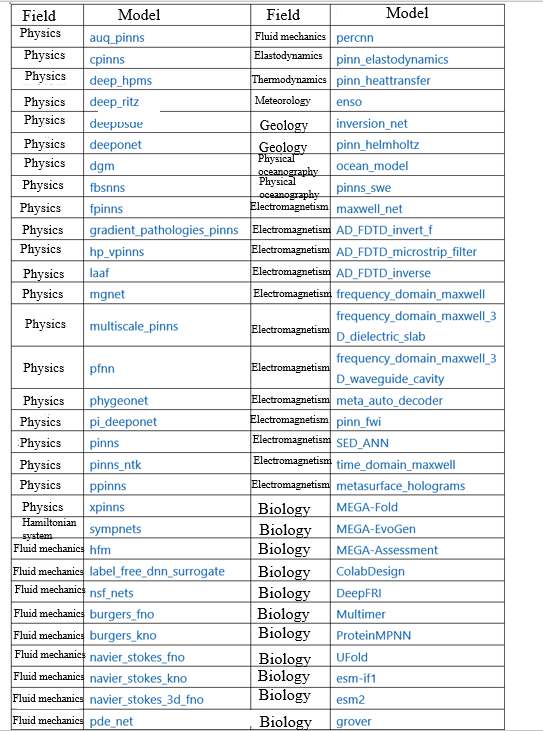

Appendix 2: Network Model Libraries

MindSpore SciAI provides a wide range of frequently used AI4Science models. The following figure shows the implemented network models and their applicable fields.

Appendix 3: Usage

MindSpore SciAI provides two model training and evaluation modes with flexible and simple APIs.

3.1. Advanced APIs (Recommended)

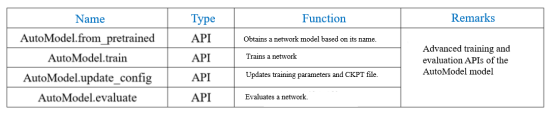

3.1.1 Using AutoModel to Train and Fine-tune Models

Obtain a supported network model through the AutoModel.from_pretrained API. Before calling AutoModel.train to train the model, you can call AutoModel.update_config to adjust training parameters or to load a .ckpt file for fine-tuning. The optional parameters of AutoModel.update_config depend on the model type. For details, see "MindSpore-based Implementation and Network Parameters" in the network model library.

    from sciai.model import AutoModel

    # Obtain a CPINNs model.
    model = AutoModel.from_pretrained("cpinns")

    # Use the default parameters to train the network. The generated images, data, and logs are saved to the execution directory of the user.
    model.train()

    # Alternatively, load the .ckpt file.
    model.update_config(load_ckpt=True, load_ckpt_path="./checkpoints/your_file.ckpt", epochs=500)

    # Continue to train the model based on the newly loaded parameters for fine-tuning.
    model.train()

3.1.2 Using AutoModel to Evaluate a Model

You can use AutoModel.evaluate to evaluate the training result. By default, this API loads the .ckpt file provided in the SciAI model library for evaluation. You can also call model.update_config to specify a different file to load.

    from sciai.model import AutoModel

    # Obtain a CPINNs model.
    model = AutoModel.from_pretrained("cpinns")

    # Load the default .ckpt file of the network and evaluate the model.
    model.evaluate()

    # Load the customized .ckpt file.
    model.update_config(load_ckpt=True, load_ckpt_path="./checkpoints/your_file.ckpt")

    # Evaluate the network model.
    model.evaluate()

3.1.3 Restoring the Parameter File and Dataset

If the automatically downloaded model parameter .ckpt file or dataset is deleted by mistake, you can run the following command to update the parameters and forcibly download the file again:

    model.update_config(force_download=True)

3.2. Script APIs

3.2.1 Preparing the Environment

Run the following command to clone the entire repository and prepare environment variables. Run the .env file to add the SciAI project directory to the environment variable PYTHONPATH.

    git clone https://gitee.com/mindspore/mindscience
    source ./mindscience/SciAI/.env

Then, use the source code for training and inference by referring to "Quick Start" of each README file in the network model library.

3.2.2 Training the Network Model

Use the training script train.py to train the network model.

    cd ./mindscience/SciAI/sciai/model/cpinns/
    python ./train.py [--parameters]

3.2.3 Loading the .ckpt File to Fine-tune the Network Model

    python ./train.py --load_ckpt true --load_ckpt_path {your_ckpt_file.ckpt} [--parameters]

3.2.4 Evaluating the Network Model

Use the eval.py script to evaluate the trained network model.

    python ./eval.py [--parameters]

[--parameters] includes the learning rate, number of training epochs, data reading and saving paths, and checkpoint file loading path. For details, see "MindSpore-based Implementation and Network Parameters" in the network model library.
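
When the script commands are driven from Python (for example, in a batch of experiments), the command line can be assembled programmatically. The sketch below is a hypothetical helper, not part of MindSpore SciAI: `build_train_command` is an illustrative name, and only the `--load_ckpt` and `--load_ckpt_path` flags shown in the fine-tuning command are assumed to exist. Any further flags must be taken from the README file of the chosen model.

```python
import shlex


def build_train_command(ckpt_path=None, extra_args=()):
    """Assemble the argument list for train.py (hypothetical helper).

    ckpt_path:  optional .ckpt file to load for fine-tuning.
    extra_args: further model-specific flags, passed through unchanged.
    """
    command = ["python", "./train.py"]
    if ckpt_path is not None:
        # Flags as they appear in the fine-tuning command of section 3.2.3.
        command += ["--load_ckpt", "true", "--load_ckpt_path", ckpt_path]
    command += list(extra_args)
    return command


# Print a shell-ready command string for inspection before running it.
print(shlex.join(build_train_command("./checkpoints/your_file.ckpt")))
```

The returned list can be passed directly to `subprocess.run` from the model directory, which avoids shell quoting problems with paths that contain spaces.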

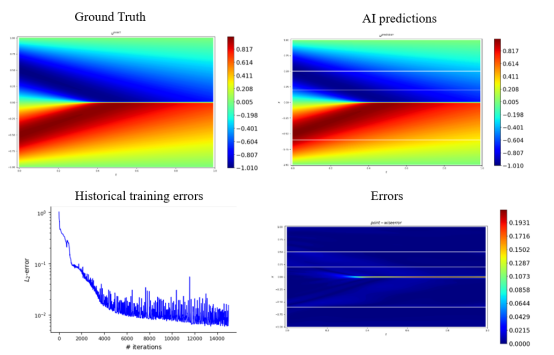

3.2.5 Checking the CPINNs Computing Results

References

[1] https://gitee.com/mindspore/mindscience/tree/master

[2] https://gitee.com/mindspore/mindscience/tree/master/SciAI

[3] Raissi M, Perdikaris P, Karniadakis G E. Physics informed deep learning (part i): Data-driven solutions of nonlinear partial differential equations[J]. arXiv preprint arXiv:1711.10561, 2017.

[4] Yu B. The deep Ritz method: a deep learning-based numerical algorithm for solving variational problems[J]. Communications in Mathematics and Statistics, 2018, 6(1): 1-12.

[5] Sheng H, Yang C. PFNN: A penalty-free neural network method for solving a class of second-order boundary-value problems on complex geometries[J]. Journal of Computational Physics, 2021, 428: 110085.

[6] Li Z, Kovachki N, Azizzadenesheli K, et al. Fourier neural operator for parametric partial differential equations[J]. arXiv preprint arXiv:2010.08895, 2020.

[7] Lu L, Jin P, Karniadakis G E. DeepONet: Learning nonlinear operators for identifying differential equations based on the universal approximation theorem of operators[J]. arXiv preprint arXiv:1910.03193, 2019.

[8] Long Z, Lu Y, Ma X, et al. PDE-Net: Learning PDEs from data[C]//International Conference on Machine Learning. PMLR, 2018: 3208-3216.