mindspore.ops.leaky_relu

- mindspore.ops.leaky_relu(input, alpha=0.2)

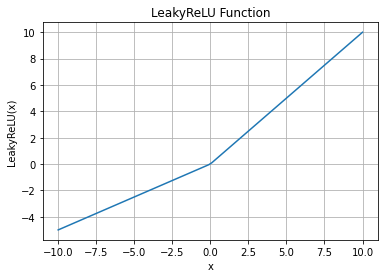

Leaky ReLU activation function. Each element of input that is less than 0 is multiplied by alpha.

Warning

After version 2.9.0, the parameter alpha will be renamed to negative_slope, and the default value will change from 0.2 to 0.01.

The activation function is defined as:

\[\text{leaky_relu}(input) = \begin{cases}input, &\text{if } input \geq 0; \cr {\alpha} * input, &\text{otherwise.}\end{cases}\]

where \(\alpha\) represents the alpha parameter.
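The piecewise definition can be checked by hand with a small plain-Python sketch. The helper `leaky_relu_ref` below is a hypothetical stand-in written for illustration, not the MindSpore implementation. It applies the rule element by element to nested lists and evaluates it with both the current default slope (0.2) and the slope named in the warning above (0.01).

```python
def leaky_relu_ref(values, alpha=0.2):
    """Element-wise leaky ReLU over a (possibly nested) list of numbers.

    Hypothetical reference helper, not part of MindSpore.
    """
    if isinstance(values, list):
        return [leaky_relu_ref(v, alpha) for v in values]
    # Piecewise rule: keep non-negative inputs, scale negative ones by alpha.
    return values if values >= 0 else alpha * values


x = [[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]]

# Current default slope (alpha=0.2).
y_current = leaky_relu_ref(x, alpha=0.2)

# Slope announced as the future default in the warning (0.01).
y_future = leaky_relu_ref(x, alpha=0.01)

print(y_current)
print(y_future)
```

Non-negative entries are identical in both results. Only the negative entries differ, and they shrink by a factor of 20 when the slope drops from 0.2 to 0.01, so code that relies on the default may see different outputs once the default changes.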

For more details, see Rectifier Nonlinearities Improve Neural Network Acoustic Models.

LeakyReLU Activation Function Graph:

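- Parameters

  - input (Tensor): The input tensor, of any dimension.
  - alpha (Union[int, float]): Slope applied to elements of input that are less than 0. Default: 0.2.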

- Returns

Tensor, has the same type and shape as the input.

- Raises

- Supported Platforms:

Ascend GPU CPU

Examples

    >>> import mindspore
    >>> import numpy as np
    >>> from mindspore import Tensor, ops
    >>> x = Tensor(np.array([[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]]), mindspore.float32)
    >>> print(ops.leaky_relu(x, alpha=0.2))
    [[-0.2  4.  -1.6]
     [ 2.  -1.   9. ]]
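As a cross-check that does not require MindSpore, the same input can be run through a NumPy-only sketch of the piecewise rule. This is an illustrative equivalent built on `np.where`, not how `ops.leaky_relu` is implemented. The names `expected` and `alpha` are chosen here for the example.

```python
import numpy as np

# Same input values and dtype as the example above.
x = np.array([[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]], dtype=np.float32)
alpha = 0.2

# Keep x where x >= 0, otherwise use alpha * x.
expected = np.where(x >= 0, x, alpha * x).astype(np.float32)
print(expected)
```

The resulting array should agree with the values printed by `ops.leaky_relu(x, alpha=0.2)` in the example, up to float32 rounding.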